Synology DS2015xs Review: An ARM-based 10G NAS

by Ganesh T S on February 27, 2015 8:20 AM EST- Posted in

- NAS

- Storage

- Arm

- 10G Ethernet

- Synology

- Enterprise

Direct-Attached Storage Performance

The presence of 10G ports on the Synology DS2015xs presents some interesting use-cases. As an example, video production houses have a need for high-speed storage. Usually, direct-attached storage units suffice. Thunderbolt is popular for this purpose, both in single-user setups and as part of a SAN. However, as 10G network interfaces become common and affordable, there is scope for NAS units to act as direct-attached storage units as well. In order to evaluate the DAS performance of the Synology DS2015xs, we utilized the DAS testbed augmented with an appropriate CNA (converged network adapter), as described in the previous section. To get an idea of the available performance for different workloads, we ran a couple of quick artificial benchmarks along with a subset of our DAS test suite.

CIFS

In the first case, we evaluate the performance of a CIFS share created in a RAID 5 volume. One of the aspects to note is that the direct link between the NAS and the testbed is configured with an MTU of 9000 (compared to the default of 1500 used for the NAS benchmarks).
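As a rough illustration of why the larger MTU matters on a direct link, the sketch below estimates the share of on-wire bytes that can carry TCP payload at each MTU. It assumes standard Ethernet framing (38 bytes of preamble, header, FCS and inter-frame gap per frame) and 20-byte IPv4 and TCP headers with no options. The function name is ours, and the figures are theoretical ceilings, not measurements from the DS2015xs.

```python
# Per-frame bytes on the wire that are not part of the IP packet (assumed
# standard Ethernet): preamble 7 + start delimiter 1 + header 14 + FCS 4
# + inter-frame gap 12.
ETHERNET_OVERHEAD = 7 + 1 + 14 + 4 + 12  # 38 bytes

# Assumed IPv4 and TCP headers without options.
IP_TCP_HEADERS = 20 + 20


def tcp_payload_efficiency(mtu):
    """Fraction of on-wire bytes that carry TCP payload for a given MTU."""
    payload = mtu - IP_TCP_HEADERS
    on_wire = mtu + ETHERNET_OVERHEAD
    return payload / on_wire


for mtu in (1500, 9000):
    share = tcp_payload_efficiency(mtu)
    print(f"MTU {mtu}: {share:.1%} of line rate available to TCP payload")
```

Under these assumptions the ceiling moves from roughly 94.9% to 99.1% of line rate. Jumbo frames also mean fewer packets for the same amount of data, which tends to reduce per-packet processing on both ends.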

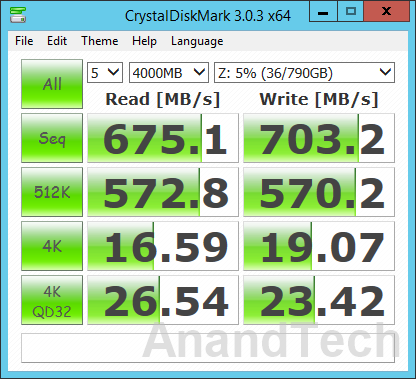

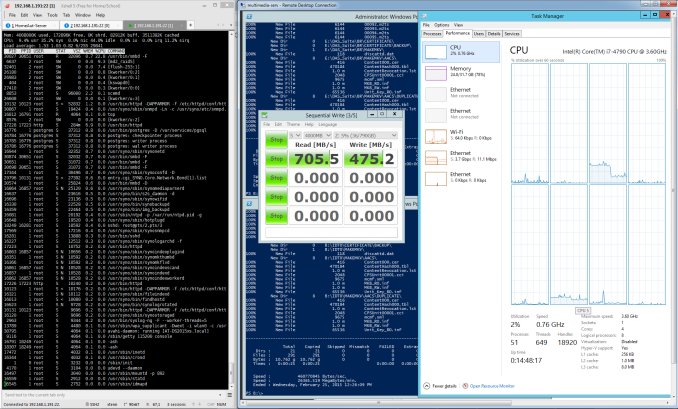

Our first artificial benchmark is CrystalDiskMark, which tackles sequential accesses as well as 512 KB and 4 KB random accesses. For 4 KB accesses, the benchmark is repeated at a queue depth of 32. As the screenshot above shows, the Synology DS2015xs manages around 675 MBps reads and 703 MBps writes. The write number matches the claims made by Synology in their marketing material, but the 675 MBps read speed is a far cry from the promised 1930 MBps. We moved on to ATTO, another artificial benchmark, to check if the results were any different.

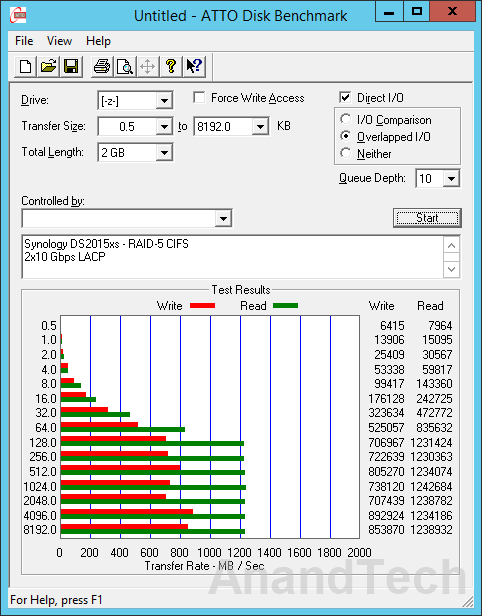

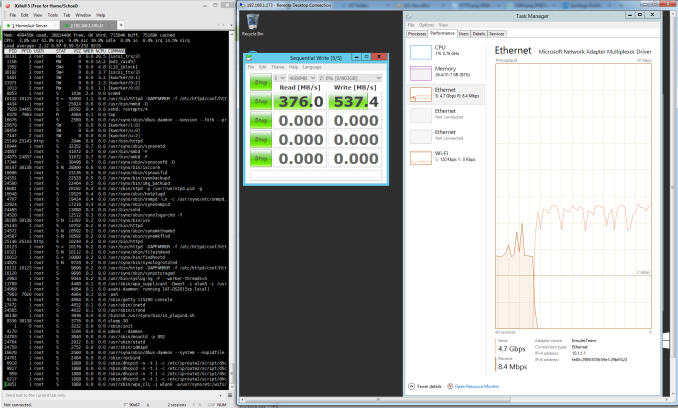

ATTO Disk Benchmark tackles sequential accesses with different block sizes. We configured a queue depth of 10 and a master file size of 4 GB for accesses with block sizes ranging from 512 bytes to 8 MB and the results are presented above. In this benchmark, we do see 1 MB block sizes giving read speeds of around 1214 MBps.

| Synology DS2015xs - 2x10 Gbps LACP - RAID-5 CIFS DAS Performance (MBps) | Read | Write |
| --- | --- | --- |
| Photos | 594.69 | 363.47 |
| Videos | 915.95 | 500.09 |
| Blu-ray Folder | 949.32 | 543.93 |

For real-world performance evaluation, we wrote and read back multi-gigabyte folders of photos, videos and Blu-ray files. The results are presented in the table above. These numbers show that it is possible to achieve read speeds close to 1 GBps for real-life workloads. The advantage of a unit like the DS2015xs is that the 10G interfaces can be used as a DAS interface, while the other two 1G ports can connect the unit to the rest of the network for sharing the contents seamlessly with other computers.

iSCSI

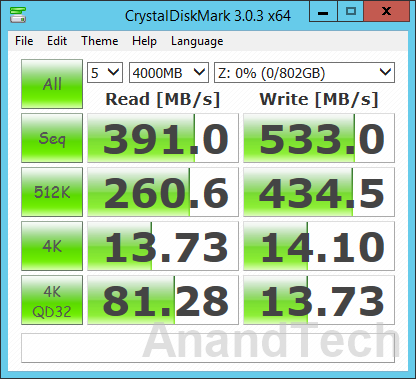

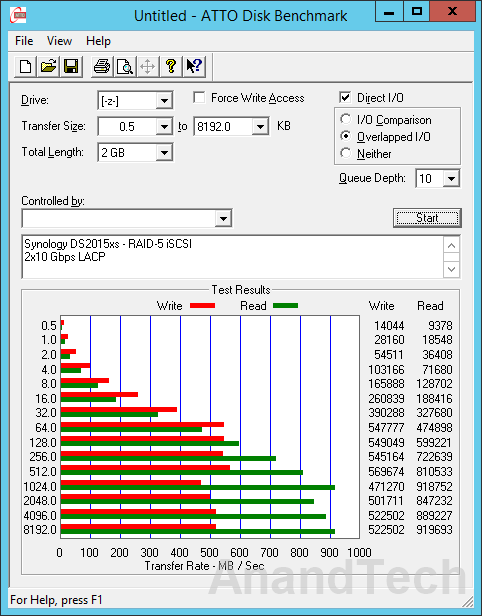

We configured a block-level (Single LUN on RAID) iSCSI LUN in RAID-5 using all available disks. Network settings were retained from the previous evaluation environment. The same benchmarks were repeated in this configuration also.

The iSCSI performance seems to be a bit off compared to what we got with CIFS. Results from the real-world performance evaluation suite are presented in the table below. These numbers track what we observed in the artificial benchmarks too.

| Synology DS2015xs - 2x10 Gbps LACP - RAID-5 iSCSI DAS Performance (MBps) | Read | Write |
| --- | --- | --- |
| Photos | 535.28 | 532.54 |
| Videos | 770.41 | 483.97 |
| Blu-ray Folder | 734.51 | 505.3 |

Performance Analysis

The performance numbers that we obtained with teamed ports (20 Gbps) were frankly underwhelming. The more worrisome aspect was that we couldn't replicate Synology's claims of upwards of 1900 MBps throughput for reads. In order to determine if there were any issues with our particular setup, we wanted to isolate the problem to either the disk subsystem on the NAS side or the network configuration. Unfortunately, Synology doesn't provide any tools to evaluate them separately. For optimal functioning, 10G links require careful configuration on either side.

iPerf is the tool of choice for many when it comes to ensuring that the network segment is operating optimally. Unfortunately, iPerf for DSM requires an optware package that is not yet available for the Alpine platform. On the positive side, Synology had uploaded the tool chain for Alpine on SourceForge - this helped us to cross-compile iPerf from source for the DS2015xs. Armed with iPerf on both the NAS and the testbed side, we proceeded to evaluate the links operating simultaneously without the teaming overhead.

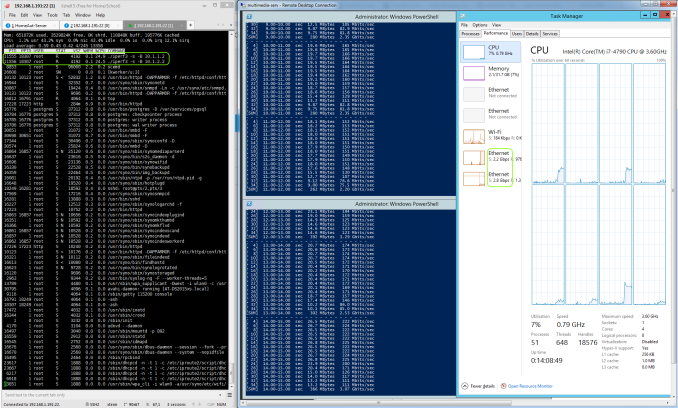

The screenshot above shows that the two links together saturated at around 5 Gbps (out of the theoretically possible 20 Gbps), but the culprit was our cross-compiled iPerf executable (with each instance completely saturating one core - 25% of the CPU).
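For readers who want to see what a single-stream throughput test does in principle, here is a minimal Python sketch. It is not iPerf and not what we ran on the NAS: it is a simplified stand-in that pushes a fixed number of bytes through one TCP connection on the local loopback interface and times the transfer. The function name, default sizes and the use of loopback are all our own assumptions for illustration. A single sender like this one runs on one thread, which is the same reason one test instance can end up limited by one CPU core.

```python
import socket
import threading
import time


def measure_loopback_throughput(total_bytes=16 * 1024 * 1024, chunk_size=64 * 1024):
    """Send total_bytes over one TCP connection on localhost.

    Returns (bytes_received, elapsed_seconds).
    """
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
    server.listen(1)
    port = server.getsockname()[1]
    result = {}

    def receiver():
        # Accept one connection and count bytes until the sender closes it.
        conn, _ = server.accept()
        received = 0
        with conn:
            while True:
                data = conn.recv(chunk_size)
                if not data:
                    break
                received += len(data)
        result["received"] = received

    thread = threading.Thread(target=receiver)
    thread.start()

    payload = b"\0" * chunk_size
    start = time.perf_counter()
    with socket.create_connection(("127.0.0.1", port)) as client:
        sent = 0
        while sent < total_bytes:
            n = min(chunk_size, total_bytes - sent)
            client.sendall(payload[:n])
            sent += n
    thread.join()  # wait until the receiver has drained the connection
    elapsed = time.perf_counter() - start
    server.close()
    return result["received"], elapsed


if __name__ == "__main__":
    received, elapsed = measure_loopback_throughput()
    print(f"{received / elapsed / 1e6:.0f} MB/s over loopback")
```

To exercise a real link, the receiver and sender halves would have to run on the two machines at either end of it. Loopback numbers say nothing about the NIC or cabling.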

In the CIFS case, the smbd process is not multi-threaded, and this severely limits how much of the 10G links' bandwidth can be utilized.

In the iSCSI case, the iscsi_trx process also seems to saturate one CPU core, leading to similar results for 10G link utilization.

On the whole, the 10G links are getting utilized, but not to the full possible extent. The utilization is definitely more than, say, four single GbE links teamed together, but the presence of two 10G links had us expecting more from the unit as a DAS.
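To put the figures from this page in perspective, the short calculation below expresses some of them as a fraction of the raw line rate. It is a back-of-the-envelope sketch: it takes MBps as 10^6 bytes per second and ignores protocol overhead, and the helper names are ours.

```python
LINK_GBPS = 20  # two teamed 10G ports

# Throughput figures quoted on this page, in MBps.
MEASURED_MBPS = {
    "CrystalDiskMark read (CIFS)": 675,
    "CrystalDiskMark write (CIFS)": 703,
    "ATTO 1 MB read (CIFS)": 1214,
    "Blu-ray folder read (CIFS)": 949.32,
    "Videos read (iSCSI)": 770.41,
}


def line_rate_mbps(gbps):
    """Raw line rate in MBps, assuming 1 Gbps = 1000 Mbps and 8 bits per byte."""
    return gbps * 1000 / 8


def utilization(measured_mbps, link_gbps):
    """Measured throughput as a fraction of the raw line rate."""
    return measured_mbps / line_rate_mbps(link_gbps)


for name, mbps in MEASURED_MBPS.items():
    print(f"{name}: {utilization(mbps, LINK_GBPS):.0%} of {LINK_GBPS} Gbps")

print(f"Four teamed GbE links top out at {line_rate_mbps(4):.0f} MBps")
```

By this measure, even the best ATTO read result uses a little under half of the teamed bandwidth, while every figure listed still exceeds the 500 MBps ceiling of four teamed GbE links.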

49 Comments


Dug - Saturday, February 28, 2015

Actually RAID 10 is used far more than RAID 5 or 6. With RAID 5 actually not even being listed as an option with Dell anymore.

The random write IOPS loss from RAID6 is not worth it vs RAID10.

Rebuild times are 300% faster with RAID10.

The marginal cost of adding another pair of drives to increase the RAID10 array would be easier than trying to increase IO performance later on a RAID6 array.

But then again, this is mostly for combining os, apps, and storage (VM). For just storage, it may not make any difference depending on the how many users or application type.

SirGCal - Sunday, March 1, 2015

That's missing the point entirely. If you lose a drive from each subset of RAID10, you're done. It's basically a RAID 0 array, mirrored to another one (RAID 1). You could lose one entire array and be fine, but lose one disk out of the working array and you're finished. The point of RAID 6 is you can lose any 2 disks and still operate. So most likely scenario is you lose one, replace it and the rebuild is going and another fails.

RAID0 is pure performance, RAID1 is drive for drive mirroring, RAID10 is a combination of the two, RAID 5 offers one drive (any) redundancy. Not as useful anymore. RAID 6 offers two. The other factor is you lose less storage room with RAID 6 than RAID 0. More drive security, less storage loss. More overhead sure but that's still nothing for the small business or home user's media storage. So, assuming 4TB drives x 8 drives... RAID 6 = 24TB of usable storage space (well, more like 22 but we're doing simple math here). RAID 10 = 16TB. And I'm all about huge storage with as much security as reasonably possible.

And who gives a crap what Dell thinks anyhow? We never had more trouble with our hardware than the few years the company switched to them. Then promptly switched away a few years after.

DigitalFreak - Monday, March 2, 2015

You are confusing RAID 0+1 with RAID 10 (or 1+0). http://www.thegeekstuff.com/2011/10/raid10-vs-raid...

0+1 = Striped then mirrored

1+0 = Mirrored then striped

Jaybus - Monday, March 2, 2015

RAID 10 is not exactly 1+0, at least not in the Linux kernel implementation. In any case, RAID 10 can have more than 2 copies of every chunk, depending on the number of available drives. It is a tradeoff between redundancy and disk usage. With 2 copies, every chunk is safe from a single disk failure and the array size is half of the total drive capacity. With 3, every chunk is safe from two-disk failure, but the array size is down to 1/3 of the total capacity. It is not correct to state that RAID 10 cannot withstand two-drive failures. Also, since not all chunks are on all disks, it is also possible that a RAID 10 survives a multi-disk failure. It is just not guaranteed that it will unless copies > 2. A positive for RAID 10 is that a degraded RAID 10 generally has no corresponding performance degradation.

questionlp - Friday, February 27, 2015

There's the FreeNAS Mini that can be ordered via Amazon. I think you can order it sans drives or pre-populated with four drives. I've been considering getting one, but I don't know how well they perform vs a Syn or other COTS NAS boxen.

usernametaken76 - Friday, February 27, 2015

iXsystems sells a few different lines of ZFS capable hardware. The FreeNAS Mini which was mentioned wouldn't compete with this unit as it is more geared towards the home user. I see this product as more SOHO oriented than consumer level kit. The TrueNAS products sold by iXsystems are much more expensive than the consumer level gear, but you get what you pay for (backed by expert FreeBSD developers, FreeNAS developers, quality support.)

zata404 - Sunday, March 1, 2015

The short answer is no.

bleppard - Monday, March 2, 2015

Infortrend has a line of NAS that use ZFS. The EonNAS Pro 850 most closely lines up with the NAS under review in this article. Infortrend's NAS boxes seem to have some pretty advanced features. I would love to have Anandtech review them.

DanNeely - Monday, March 2, 2015

I'd be more interested in seeing a review of the 210/510 because they more closely approximate mainstream SOHO NASes in specifications; although at $500/$700 they're still a major step up in price over midrange QNap/Synology units.

It's not immediately clear from their documentation, I'm also curious if they're running a stock version of OpenSolaris that allows easy patching from Oracle's repositories, or have customized it enough to make customers dependent on them for major OS updates.

DanNeely - Monday, March 2, 2015

Also of interest in those models would be performance scaling to more modest hardware, the x10 units only have baytrail based processors.