Huawei & Honor's Recent Benchmarking Behaviour: A Cheating Headache

by Andrei Frumusanu & Ian Cutress on September 4, 2018 8:59 AM EST - Posted in Smartphones, Huawei, SoCs, Benchmarks, Honor, Kirin 970

The Raw Benchmark Numbers

Section By Andrei Frumusanu

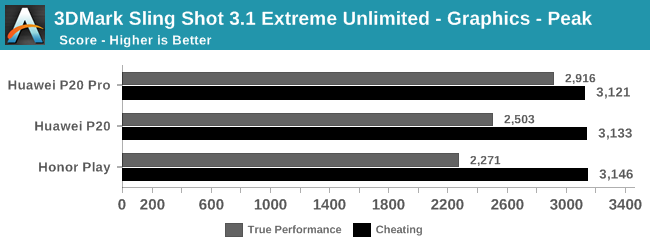

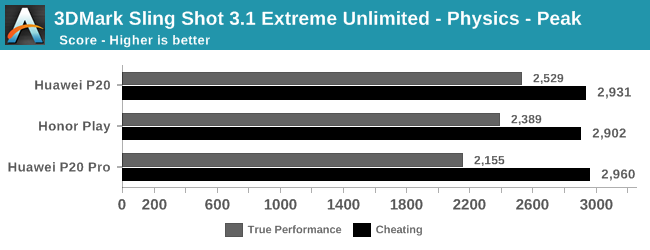

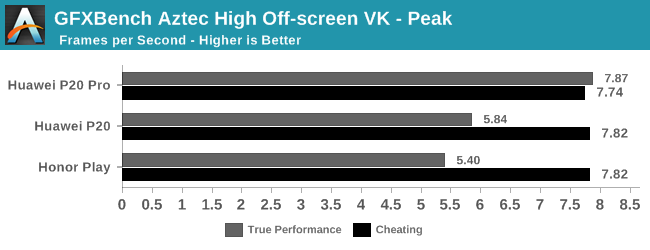

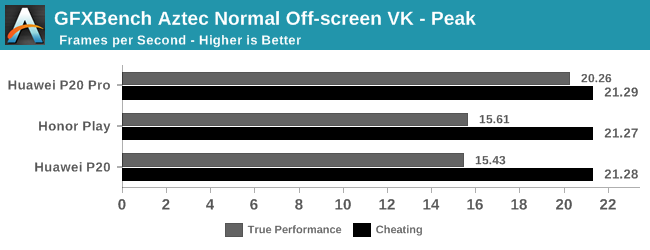

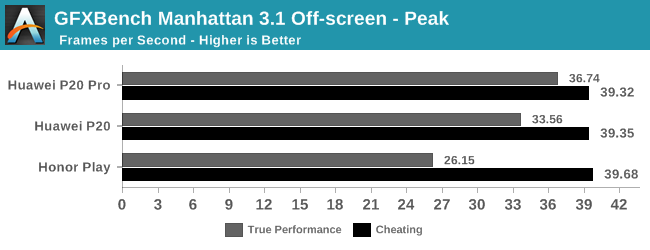

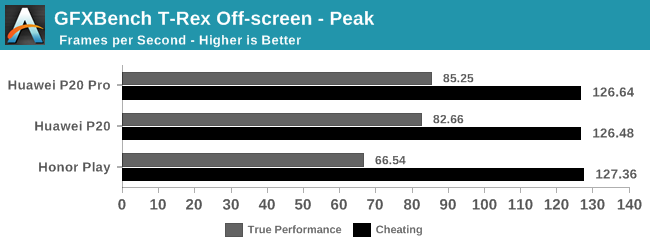

Before we go into more detail, we're going to look at how much this behaviour affects benchmark scores. The key lies in the difference between having Huawei/Honor's benchmark detection mode on and off. We are using our mobile GPU test suite, which includes Futuremark’s 3DMark and Kishonti’s GFXBench.

The analysis is currently limited to the P20s and the new Honor Play, as I don’t yet have newer stock firmware on my Mate 10s. It is likely that the Mate 10 will exhibit similar behaviour; Ian has also confirmed that he's seeing cheating behaviour on his Honor 10. This points to most (if not all) Kirin 970 devices released this year being affected.

Without further ado, here are some of the differences identified between running the same benchmarks while they are detected by the firmware (cheating) and the default performance that applies to any non-whitelisted application (True Performance). The non-whitelisted application is a version provided to us by the benchmark developer; it is undetectable and not publicly available (otherwise it would be easy to spot).
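The detection described above amounts to an allow-list lookup on the application's identity. The sketch below is a hypothetical illustration, not Huawei's firmware: the `PowerProfile` type, the profile names, the temperature and power values, and the `com.example...` app identifiers are all invented placeholders. It shows why a renamed, unpublished build of a benchmark gets the default behaviour: the decision keys on which app is running, not on what the workload is doing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PowerProfile:
    """A thermal/power policy a firmware could apply to the foreground app (hypothetical)."""
    name: str
    thermal_target_c: float   # hypothetical temperature the governor tries to hold
    sustained_power_w: float  # hypothetical sustained power budget


# Hypothetical values chosen for illustration only; not measured or vendor figures.
DEFAULT_PROFILE = PowerProfile("default", thermal_target_c=40.0, sustained_power_w=4.0)
BOOST_PROFILE = PowerProfile("boost", thermal_target_c=70.0, sustained_power_w=8.5)

# Placeholder identifiers standing in for the publicly available benchmark builds.
BENCHMARK_WHITELIST = frozenset({
    "com.example.public_benchmark_a",
    "com.example.public_benchmark_b",
})


def select_profile(app_id: str) -> PowerProfile:
    """Return the relaxed profile only for whitelisted app identifiers."""
    if app_id in BENCHMARK_WHITELIST:
        return BOOST_PROFILE
    return DEFAULT_PROFILE
```

For example, `select_profile("com.example.public_benchmark_a")` returns the boost profile, while an internal build under a different identifier such as `"com.example.internal_benchmark_a"` falls through to the default profile, even though both would run the same rendering workload.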

We see a stark difference between the resulting scores, with our internal versions of the benchmarks performing significantly worse than the publicly available versions. All three smartphones perform almost identically in the higher power mode, as they all share the same SoC. This contrasts sharply with the real performance of the phones, which is anything but identical, as the three phones have different thermal limits resulting from their different chassis and cooling designs. Consequently, the P20 Pro, being the largest and most expensive, has better thermals, which shows in the 'regular' benchmarking mode.

Raising Power and Thermal Limits

What is happening here with Huawei is a bit unusual compared with how we’re used to seeing vendors cheat in benchmarks. In the past we’ve seen vendors raise SoC frequencies or lock them to their maximum states, pushing performance beyond what’s usually available to generic applications.

What Huawei is doing instead is boosting benchmark scores by coming at it from the other direction: the benchmarking applications are the only use-cases where the SoC actually performs at its advertised speeds. Meanwhile, every other real-world application is throttled significantly below that state due to the thermal limitations of the hardware. What we end up seeing with unthrottled performance is perhaps the 'true' form of an unconstrained SoC, although this is completely academic compared with what users actually experience.

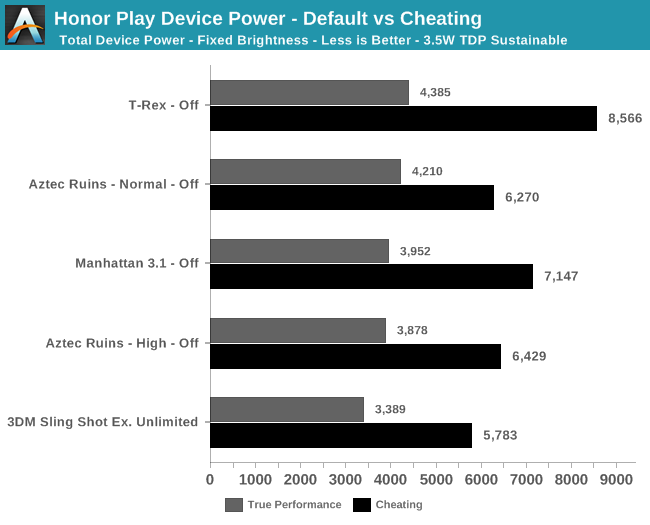

To demonstrate the difference in power behaviour between the two throttling modes, I measured the power on the newest Honor Play. Here I’m showcasing total device power at fixed screen brightness; for GFXBench the 3D phase of the benchmark is measured for power, while for 3DMark I’m including the totality of the benchmark run from start to finish (because it has different phases).
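Averaging over a single benchmark phase versus a whole run can be expressed as a time-weighted mean of sampled power. The sketch below is an assumption about how such a trace might be reduced, not the tooling used for these measurements; the `average_power` function and the sample trace are hypothetical, and samples are assumed to be sorted by time.

```python
from typing import Optional, Sequence, Tuple

Sample = Tuple[float, float]  # (time in seconds, total device power in watts)


def average_power(trace: Sequence[Sample],
                  start_s: Optional[float] = None,
                  end_s: Optional[float] = None) -> float:
    """Time-weighted mean power (W) over the samples inside [start_s, end_s].

    Energy is integrated with the trapezoidal rule and divided by the elapsed
    time, so unevenly spaced samples are weighted correctly. Samples outside
    the window are dropped; the window edges are not interpolated.
    """
    pts = [(t, p) for t, p in trace
           if (start_s is None or t >= start_s) and (end_s is None or t <= end_s)]
    if len(pts) < 2:
        raise ValueError("need at least two samples inside the window")
    energy_j = sum((t1 - t0) * (p0 + p1) / 2.0
                   for (t0, p0), (t1, p1) in zip(pts, pts[1:]))
    return energy_j / (pts[-1][0] - pts[0][0])
```

For a GFXBench-style measurement one would pass the start and end times of the 3D phase; for 3DMark, leaving both bounds unset averages the entire run, loading screens and all. With the toy trace `[(0, 1), (1, 1), (2, 5), (3, 5), (4, 1)]`, the whole-run average is 3.0 W while the window from 2 s to 3 s averages 5.0 W, which is why the choice of window matters when comparing figures.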

The differences here are astounding: in the 'true performance' state, the chip is already reaching 3.5-4.4W. These are the kind of power figures you would want a smartphone to limit itself to in 3D workloads. By contrast, the 'cheating' variants of the benchmarks completely explode the power budget. We see power figures above 6W, with T-Rex reaching an insane 8.5W. In a 3D battery test, these figures very quickly trigger an 'overheating' notification on the device, showing that the resulting temperatures exceed what the device's own software expects.
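The text gives only the 3.5-4.4W range for the 'true performance' state, not a per-test figure for T-Rex. Assuming T-Rex's throttled power falls somewhere inside that range, the boosted 8.5W run draws roughly 1.9x to 2.4x as much power:

$$
\frac{8.5\,\text{W}}{4.4\,\text{W}} \approx 1.93,
\qquad
\frac{8.5\,\text{W}}{3.5\,\text{W}} \approx 2.43
$$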

This means that the 'true performance' figures aren’t actually stable: they strongly depend on the device’s temperature (which is typical for most phones). Huawei/Honor are not actually blocking the GPU from reaching its peak frequency state; instead, the default behavior is governed by a very harsh thermal throttling mechanism that tries to maintain significantly lower SoC temperatures and overall power consumption.

The net result is that in the phones' normal mode, peak power consumption during these tests can reach the same figures posted by the unthrottled variants, but it very quickly falls back drastically. Here the device throttles down to 2.2W in some cases, reducing performance considerably.
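The pattern described here (a brief peak followed by a sharp fall to a much lower sustained level, with the two modes differing in thermal target rather than peak frequency) can be reproduced qualitatively with a toy model. Everything below is an assumption for illustration, not Huawei's algorithm: a single lumped thermal mass, a simple governor that cuts the power cap by 10% per step above a temperature target and raises it by 5% per step below it, and invented thermal constants. The 8.5W ceiling and 2.2W floor are borrowed from the figures above only as plausible bounds.

```python
from typing import List, Tuple


def simulate(thermal_target_c: float,
             peak_power_w: float = 8.5,    # ceiling borrowed from the T-Rex figure
             floor_power_w: float = 2.2,   # floor borrowed from the throttled figure
             ambient_c: float = 25.0,      # hypothetical ambient temperature
             r_c_per_w: float = 6.0,       # hypothetical thermal resistance
             c_j_per_c: float = 20.0,      # hypothetical heat capacity
             step_s: float = 0.5,
             duration_s: float = 300.0) -> List[Tuple[float, float]]:
    """Return (time_s, power_w) samples for a toy thermal-throttling governor.

    Thermal model (hypothetical): dT/dt = (P - (T - ambient) / R) / C,
    integrated with a forward Euler step.
    """
    temp_c = ambient_c
    cap_w = peak_power_w
    trace = []
    t = 0.0
    while t <= duration_s:
        # The workload always wants peak power; the governor's cap limits it.
        power_w = cap_w
        trace.append((t, power_w))
        temp_c += step_s * (power_w - (temp_c - ambient_c) / r_c_per_w) / c_j_per_c
        if temp_c > thermal_target_c:
            cap_w = max(floor_power_w, cap_w * 0.9)   # throttle hard when too hot
        else:
            cap_w = min(peak_power_w, cap_w * 1.05)   # recover slowly when cool
        t += step_s
    return trace


def tail_mean(trace: List[Tuple[float, float]], from_s: float) -> float:
    """Mean sampled power from `from_s` to the end of the trace."""
    values = [p for t, p in trace if t >= from_s]
    return sum(values) / len(values)
```

Comparing `simulate(40.0)` with `simulate(70.0)`, both traces start at the same 8.5W peak, but the low-target run falls towards the floor within roughly the first minute and then hovers near it, while the high-target run holds its peak for several minutes. The only parameter that differs is the temperature target, mirroring the observation that the default mode does not block peak frequency but enforces a much lower thermal limit.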

84 Comments


goatfajitas - Tuesday, September 4, 2018

The tech world is far too hung up on benchmarking these days. Benchmarking is like the Kardashians of tech sites. The lowest form of entertainment. :P

R0H1T - Tuesday, September 4, 2018

So the mainland phone makers are cheating in benchmarks as well? I know this isn't a China-only thing, but it seems like they're trying to grab more than they can chew.

goatfajitas - Tuesday, September 4, 2018

I am saying the tech world in general is far too hung up on it. Companies, tech sites and their visitors are so hung up on it and its perceived importance that companies pull crappy moves to appear to benchmark better.

MonkeyPaw - Tuesday, September 4, 2018

Maybe 5-10 years ago, such benchmarks were important, as the performance gain was quite noticeable. However, now I think we are well beyond the point of tangible gains on a smartphone, at least until we expect more from the devices than the current usage model.

niva - Tuesday, September 4, 2018

I'm not sure how you don't notice 10-40% improvements in peak performance and efficiency between generations; the gains are very tangible for everyone, in multiple ways, regardless of the usage model. Even if you just use your phone for making actual phone calls, you can notice the standby time increase, better radio reception, and the ability to answer calls while on LTE or wireless only. Maybe YOU don't notice these things, but please speak for yourself. Thank you!

As for Huawei, the company is shady beyond belief. I consider the Nexus 6P the only Huawei phone I've ever wanted. I don't trust them, not one bit. Then again, I don't trust Google either, but Google seems to be an unavoidable evil I have to live with, and I do trust them quite a bit more than Huawei.

Samus - Wednesday, September 5, 2018

Benchmarks are always important. If a customer is shopping for a device based on performance, the metric they have to depend on is the one measured by... benchmarks.

Flunk - Tuesday, September 4, 2018

Those brands aren't being sold here, so it's more of a deflection than a real answer. The only other recent example of this problem is the OnePlus 5, which is another Chinese phone. All Huawei is doing is making Chinese brands look bad.

techconc - Friday, September 21, 2018

No, it is normal behavior for a heavy GPU test to peak initially and then throttle back down as thermal limitations are reached. What Huawei is doing is ignoring those thermal limitations and actually overheating devices for specifically named benchmarks.

kirsch - Tuesday, September 4, 2018

They very well may be. But that is a completely orthogonal discussion to companies cheating to show better results than they should.

Reflex - Tuesday, September 4, 2018

This right here. A lot of us would agree that benchmark results are not the end-all/be-all of a device. But in no way is that an appropriate response to an article about benchmark cheating.