AMD's Radeon Software Crimson Driver Released: New Features & A New Look

by Ryan Smith & Daniel Williams on November 24, 2015 8:00 AM EST

Under The Hood: DirectX 9, Shader Caching, Liquid VR, and Power Consumption

Alongside AMD’s driver branding changes, Radeon Software Crimson Edition 15.11 also marks the first release of AMD’s major new driver branch, 15.300. Consequently, this driver release comes with a number of under-the-hood feature improvements, several of which work in conjunction with AMD’s control panel update.

DirectX 9: Frame Pacing, CF Freesync, & Frame Rate Target Control

We’ll start off our look under the hood of Crimson with AMD’s improvements for that old standard of graphics APIs: DirectX 9. While Microsoft has moved on from DirectX 9 and the last Windows OS limited to DX9 was discontinued in 2014, DirectX 9 became firmly entrenched in development circles over its nearly decade-long run, more so than I suspect anyone anticipated. Though console-quality AAA games have since switched over to DirectX 11, “lightweight” mass market games have stuck with DirectX 9, particularly team games like the MOBAs and Rocket League, where even new titles like Dota 2 Reborn use DX9 by default.

Although AMD doesn’t share every last aspect of their internal plans with us, I get the impression that AMD expected to be done with DX9 around 2013, when even the original DX11 cards were approaching 4 years old. Since that time AMD has rolled out several features that require per-API support such as their multi-GPU frame pacing improvements, CrossFire Freesync, and frame rate target control, none of which initially supported DX9.

However with Crimson AMD is doing some backtracking and at long last is adding support for these features when used in conjunction with DirectX 9. This means that it’s now possible to use AMD’s various framerate technologies – frame pacing, CrossFire Freesync, and frame rate target control (FRTC) – with DX9 games new and old.

Truthfully after AMD initially punted on CrossFire frame pacing support for DirectX 9 in 2013 I didn’t expect that we’d see support added at a later time (especially not two years later) so this comes as a bit of a surprise. However with DX9 games refusing to die and AMD in a position where they are heavily promoting the use of their APUs with MOBAs, AMD has a vested interest in making these games perform as well as possible. FRTC offers a more flexible alternative to v-sync for capping the frame rate, a potent option for conserving battery power on laptops. Meanwhile I suspect AMD’s CF improvements are specifically aimed at improving the Dual Graphics experience with AMD’s APUs, which has always been AMD’s ace in the hole for offering cheap GPU performance upgrades by allowing the APU to be used in conjunction with a cheap dGPU rather than having to disable it entirely. Otherwise there are a handful of games where these DX9 improvements will be applicable even to dGPU setups – particularly 2011’s Skyrim – however at this point in time most dGPUs should have little need for CrossFire to get good performance on legacy DX9 games.
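To make the idea of a frame rate cap concrete, here is a minimal Python sketch of an application-side limiter. It is not AMD's FRTC implementation, which lives in the driver and whose internals aren't public; the function names (`frame_budget`, `sleep_needed`, `run_capped`) are hypothetical. The point is only that, unlike v-sync, the target rate is an arbitrary number rather than something tied to the display's refresh rate, and that the GPU idles for whatever is left of each frame's time budget.

```python
import time


def frame_budget(target_fps: float) -> float:
    """Seconds available per frame at the requested cap."""
    return 1.0 / target_fps


def sleep_needed(render_time: float, target_fps: float) -> float:
    """Idle time after a frame that took `render_time` seconds.

    Returns 0 when the frame already overran its budget.
    """
    return max(0.0, frame_budget(target_fps) - render_time)


def run_capped(render_frame, target_fps: float, frames: int) -> float:
    """Render `frames` frames, idling after each one; returns elapsed seconds."""
    start = time.perf_counter()
    for _ in range(frames):
        t0 = time.perf_counter()
        render_frame()  # caller-supplied stand-in for the real rendering work
        time.sleep(sleep_needed(time.perf_counter() - t0, target_fps))
    return time.perf_counter() - start
```

The idle time is where the power savings would come from: a frame that renders in 4 ms under a 100 fps cap leaves roughly 6 ms in which the hardware has nothing to do.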

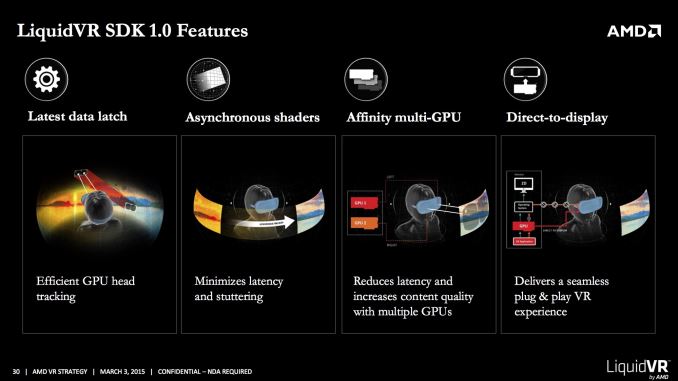

Liquid VR

Back at the 2015 Game Developers' Conference, AMD announced their LiquidVR technology. LiquidVR, in a nutshell, is AMD’s collection of virtual reality related technologies, being rolled out in preparation for the 2016 consumer launches of the Oculus Rift and HTC Vive VR headsets. LiquidVR includes AMD’s technologies for implementing efficient (and timely) last-second time warping to cut down on latency, per-eye multi-GPU rendering (allowing for near-perfect use of two GPUs), and a series of OS and driver/stack optimizations that allow VR games to bypass parts of the OS to reduce rendering latency.

At the time of their announcement AMD was just releasing the SDK to developers, as the technology was still under active development. But as of Crimson AMD is enabling the LiquidVR feature set in their consumer drivers. This won’t have any immediate ramifications since the retail headsets still aren’t here, but this will allow developers to begin final preparations for the launch of the retail headsets next year.

Shader Caching

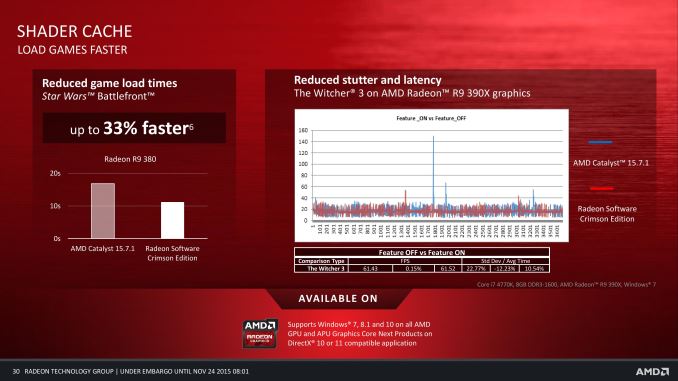

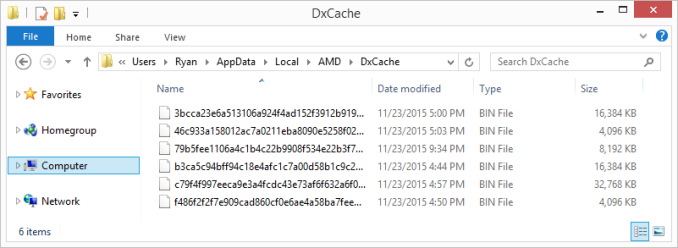

Another feature new to Crimson is shader caching. With shader caching AMD’s drivers can now transparently cache compiled game shader routines, reusing those shaders rather than recompiling them each time they’re used. DirectX lacks a universal, built-in shader caching solution, so as games have become more advanced shaders have as well, and this has increased the amount of time and resources required to compile shaders. This in turn has pretty much forced GPU vendors to implement shader caching at the driver level, both to accommodate games that make poor use of shaders and to avoid a very frustrating bottleneck. This is admittedly a case of AMD catching up with NVIDIA, but nonetheless it’s a welcome change.

Ultimately shader caching improves game performance in two specific areas. Games that do extensive pre-load shader compilation can now skip that compilation on future uses and reduce their overall load time, particularly on systems with slower CPUs (since shader compilation is a CPU operation). Meanwhile games that stream a large number of assets and regularly need to compile shaders on-the-fly can produce stuttering if the game needs to wait for a shader to compile before rendering the next frame, and while caching can’t eliminate the first instance of compilation, it can eliminate stuttering caused by successive loads of a shader.
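The caching behavior described above can be sketched in a few lines of Python. This is an illustration only, not AMD's driver code: the `ShaderCache` class and the `fake_compile` stand-in are hypothetical, the cache here is kept in memory, and nothing about how AMD keys or stores its cache is implied. It shows the one property that matters: the first request for a shader pays the compile cost, and every later request for the same source is served from the cache.

```python
import hashlib


class ShaderCache:
    """Cache compiled shaders keyed by a hash of their source text."""

    def __init__(self, compile_fn):
        self._compile = compile_fn  # the expensive, CPU-bound step
        self._store = {}            # source hash -> compiled blob
        self.hits = 0
        self.misses = 0

    def get(self, source: str) -> bytes:
        key = hashlib.sha256(source.encode("utf-8")).hexdigest()
        if key in self._store:
            self.hits += 1          # reuse: no compilation, no stall
        else:
            self.misses += 1        # first use still has to compile
            self._store[key] = self._compile(source)
        return self._store[key]


def fake_compile(source: str) -> bytes:
    """Hypothetical stand-in for a real shader compiler."""
    return source.encode("utf-8")[::-1]
```

Requesting the same shader twice from `ShaderCache(fake_compile)` records one miss and one hit, which mirrors the article's point that caching cannot remove the first compilation but can remove the repeats.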

The overall performance impact of shader caching will depend on the individual game used, and the speed of a system’s CPU. Checking Battlefield 4 and Crysis 3 didn’t show any improvements, while AMD notes that in their testing they’ve found Star Wars: Battlefront and Bioshock: Infinite to measurably benefit from caching.

Flip Queue Size

Filing this one under the “secret sauce” category, AMD tells us that they have made some changes to reduce the size of the DirectX flip queue. The flip queue is a data structure responsible for storing rendered frames, with the idea being that a game should queue up some frames to help ensure steady frame delivery. A short flip queue makes frame pacing more susceptible to being disrupted by frames that take too long (and otherwise makes inconsistent performance more likely) while a larger queue introduces additional lag.

AMD isn’t telling us what exactly they have done with the flip queue, however they note with Crimson that the size has been reduced. This is a fully transparent option – there isn’t a Radeon Settings option for it for users to access – so all users get it by default. AMD tells us that their changes have been implemented to reduce lag in games (particularly MOBAs) and shouldn’t impact framerate stability, but we don’t know just what they have done. AMD’s slide does imply that the queue has been reduced from 3 frames to 1, but these slides shouldn’t be taken as technical specifications as they’re primarily for communication/conceptual purposes.
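The trade-off between queue depth, lag, and dropped frames can be illustrated with a toy model. The Python sketch below is an assumption-laden simplification, not a description of the DirectX flip queue or of AMD's changes: the GPU renders ahead until `depth` finished frames are waiting, the display takes the oldest finished frame at every refresh, and latency is measured from the start of rendering to display. The `simulate` function and its parameters are hypothetical, and `depth` must be at least 1.

```python
from collections import deque


def simulate(render_times, depth, refresh):
    """Toy flip-queue model; returns (latencies, repeated_refreshes).

    render_times: per-frame render cost, in the same time unit as `refresh`.
    depth:        maximum number of finished frames allowed to wait (>= 1).
    refresh:      interval between display refreshes.
    """
    queue = deque()   # render-start times of finished, undisplayed frames
    gpu_time = 0      # earliest time the GPU can start its next frame
    next_frame = 0
    latencies = []
    repeats = 0       # refreshes where no new frame was ready
    t = 0
    while len(latencies) < len(render_times):
        t += refresh
        # Render ahead while the queue has room and the frame finishes by t.
        while (next_frame < len(render_times)
               and len(queue) < depth
               and gpu_time + render_times[next_frame] <= t):
            queue.append(gpu_time)
            gpu_time += render_times[next_frame]
            next_frame += 1
        stalled = len(queue) >= depth   # GPU had to wait for a free slot
        if queue:
            latencies.append(t - queue.popleft())
        else:
            repeats += 1
        if stalled:
            gpu_time = max(gpu_time, t)  # resume once the slot frees up
    return latencies, repeats


if __name__ == "__main__":
    frames = [8, 8, 8, 40, 8, 8]  # one slow frame among fast ones
    for depth in (1, 3):
        lat, rep = simulate(frames, depth, refresh=16)
        print(depth, sum(lat) / len(lat), rep)
```

With this input, the one-frame queue repeats two refreshes while the slow frame renders but averages about 21 time units of latency, whereas the three-frame queue absorbs the slow frame with no repeated refreshes at an average of about 25. That is the same tension the article describes, in miniature.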

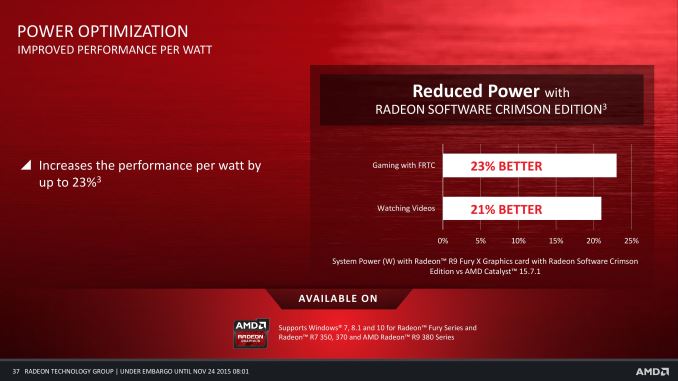

Video Decode Power

Finally, another unexpected item in AMD’s change list was a mention that they have reduced the power consumption of video decoding on some of their cards. This came as a bit of a surprise since as far as we’ve been aware, there hasn’t been a power consumption problem (and we wouldn’t think to look for one).

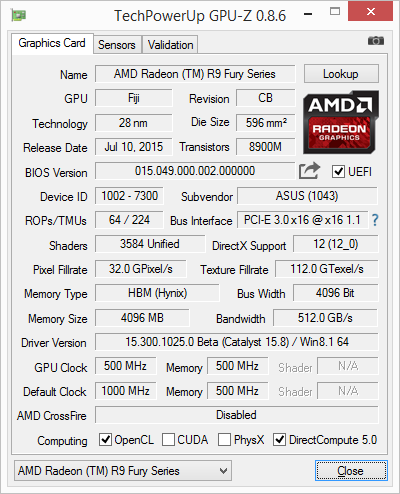

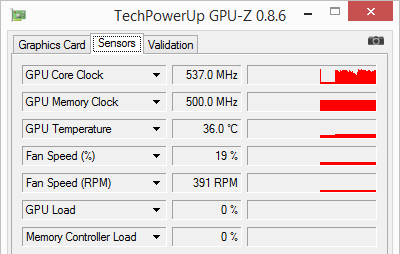

But sure enough, on our Radeon R9 Fury, playing back a 1080p H.264 video resulted in the card clocking up to between 500MHz and 700MHz depending on the display resolution. With the Crimson driver the Fury went back to staying at its idle GPU clockspeed of 300MHz, with no change in measurable performance. Meanwhile power draw at the wall went down from 83W to 78W, a small difference as a result of our high-powered testbed, but nonetheless a measurable result and one that should be greater on lower powered systems.

1080p H.264 Video Playback Power Consumption

| | Power Consumption at Wall |
|---|---|
| Idle | 76W |
| Catalyst 15.11.1 | 83W |
| Crimson 15.11 | 78W |
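Because the idle figure is included, the wall numbers can also be read as playback overhead above idle, which makes the change look larger than the raw 83W to 78W drop suggests. The short Python calculation below uses only the three measurements from the table; the variable and function names are illustrative.

```python
# Wall power measurements from the table above, in watts.
IDLE_W = 76
CATALYST_W = 83
CRIMSON_W = 78


def playback_overhead(playback_w: int, idle_w: int = IDLE_W) -> int:
    """Extra wall power drawn during video playback compared with idle."""
    return playback_w - idle_w


catalyst_overhead = playback_overhead(CATALYST_W)  # 7 W above idle
crimson_overhead = playback_overhead(CRIMSON_W)    # 2 W above idle
saved_w = CATALYST_W - CRIMSON_W                   # 5 W saved at the wall

# The same saving, expressed two ways.
share_of_total = round(100 * saved_w / CATALYST_W, 1)            # 6.0 %
share_of_overhead = round(100 * saved_w / catalyst_overhead, 1)  # 71.4 %
```

In other words, the 5W saving is about 6% of total system draw on this testbed, but roughly 71% of the power that playback added on top of idle.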

As best as we can gather, AMD had a UVD and/or desktop compositing bug on at least the Fiji cards that made them clock unnecessarily high for video playback. Frankly this seems like somewhat of a dumb thing to have to fix, but AMD correcting bugs is always appreciated.

146 Comments

PixelBurst - Tuesday, November 24, 2015 - link

You seem to have fallen for Nvidia's trick of 'gameready' drivers. These aren't WHQL, these are beta drivers, they just don't say the word beta et Voila, users like yourself think they are doing God's work when they aren't.

AS118 - Tuesday, November 24, 2015 - link

Yeah, that's just marketing spiel. If AMD called their beta drivers "cutting edge" or "performance optimized" drivers instead of "beta", I bet more people would try them and give them less flack for not making as many WHQL drivers.

I've always said that Nvidia has had better marketing than AMD, and still think they do, which is why I think that even when performance and performance / value were equal or even if AMD was superior, that Nvidia always sold more.

I always try to tell AMD to up its marketing game as much as I can, as I don't want Nvidia to ever become a monopoly and I want the GPU and CPU races to stay competitive. I say this as a former Nvidia fanboy, who realized that no company is my friend and they all want my money, and that they'd probably misbehave if they'd ever become a monopoly. AMD too, but they're the underdog right now, which is why I support them lately. If they ever did become a monopoly or near-monopoly though, that'd change, of course.

jasonelmore - Tuesday, November 24, 2015 - link

Competition in the GPU Market drives Price Cuts

But, I think Technology is pushing GPU Innovation and Performance.

VR and 4K alone are pushing GPU Engineers more than competition IMO

Dalamar6 - Wednesday, November 25, 2015 - link

Try getting an AMD card working in a rolling release linux distro like Arch, and then tell me that NVidia doesn't already have a monopoly.

Oh wait, you can't. Not without having to disable what makes Arch, Arch, and using 9 months outdated software. And probably smashing a few keyboards/monitor/windows/glass objects/video cards in the process.

fluxtatic - Wednesday, November 25, 2015 - link

What is AMD's interest in getting drivers working in Arch? With the 5 billion Linux distributions out there, there are, what, a few thousand people that are really mad about this? AMD's not exactly what one would call "financially successful", so they're wise to pick their battles.

looncraz - Wednesday, November 25, 2015 - link

Do it all the time, what's your problem?

jasonelmore - Tuesday, November 24, 2015 - link

The drivers marked WHQL are all certified, i'm not sure what your saying.

xenocea - Tuesday, November 24, 2015 - link

"Overall the average performance gain at 2560x1440 is just 1%. There are a couple of instances where there are small-but-consistent performance gains – Grand Theft Auto V and Grid: Autosport stand out here – but otherwise the performance in our other games is within the margin of error, plus or minus. Not that we were expecting anything different as this never was pitched as a golden driver, but this does make it clear that more significant performance gains are going be on a per-game basis."

WCCF Tech performance test shows a different story.

"To quickly summarize the results that are below, Fiji see’s the largest increase in performance across all games tested, with between a 2-20% increase actually seen. This is actually astounding. But mostly, all tiers of card seem to have increased in performance, benefiting greatly from the new Crimson driver."

http://wccftech.com/amd-radeon-software-performanc...

nathanddrews - Tuesday, November 24, 2015 - link

PCPer tested the DX9 frame pacing fix for Skyrim and it showed an astounding improvement.

http://www.pcper.com/files/imagecache/article_max_...

gamervivek - Tuesday, November 24, 2015 - link

wccftech's results look too good to be true with the BF4 results where they seem to be the only ones getting such a big increase. Either something is up with their setup or they're just making stuff up.