The NVIDIA GeForce GTX Titan X Review

by Ryan Smith on March 17, 2015 3:00 PM EST

Never one to shy away from high-end video cards, NVIDIA in 2013 took the next step towards establishing a definitive brand for high-end cards with the launch of the GeForce GTX Titan. Proudly named after NVIDIA’s first massive supercomputer win – the Oak Ridge National Laboratory Titan – it set a new bar in performance. It also set a new bar in build quality for a single-GPU card, and at $999 it set a new bar in price as well. It was the first true “luxury” video card, and NVIDIA would gladly sell you one of their finest video cards if your pockets were deep enough for it.

Since 2013 the Titan name has stuck around for additional products, although it never had quite the same impact as the original. The GTX Titan Black was a minor refresh of the GTX Titan, moving to a fully enabled GK110B GPU, and from a consumer/gamer standpoint it was somewhat redundant due to the existence of the nearly-identical GTX 780 Ti. Meanwhile the dual-GPU GTX Titan Z was largely ignored, its performance sidelined by its unprecedented $3000 price tag and AMD’s very impressive Radeon R9 295X2 at half the price.

Now in 2015 NVIDIA is back with another Titan, and this time they are looking to recapture a lot of the magic of the original Titan. First teased back at GDC 2015 in an Epic Unreal Engine session, and used to drive more than a couple of demos at the show, the GTX Titan X gives NVIDIA’s flagship video card line the Maxwell treatment, bringing with it all of the new features and sizable performance gains that we saw from Maxwell last year with the GTX 980. To be sure, this isn’t a reprise of the original Titan – there are some important differences that make the new Titan not the same kind of prosumer card the original was – but from a performance standpoint NVIDIA is looking to make the GTX Titan X as memorable as the original. Which is to say that it’s by far the fastest single-GPU card on the market once again.

NVIDIA GPU Specification Comparison

| | GTX Titan X | GTX 980 | GTX Titan Black | GTX Titan |
|---|---|---|---|---|
| CUDA Cores | 3072 | 2048 | 2880 | 2688 |
| Texture Units | 192 | 128 | 240 | 224 |
| ROPs | 96 | 64 | 48 | 48 |
| Core Clock | 1000MHz | 1126MHz | 889MHz | 837MHz |
| Boost Clock | 1075MHz | 1216MHz | 980MHz | 876MHz |
| Memory Clock | 7GHz GDDR5 | 7GHz GDDR5 | 7GHz GDDR5 | 6GHz GDDR5 |
| Memory Bus Width | 384-bit | 256-bit | 384-bit | 384-bit |
| VRAM | 12GB | 4GB | 6GB | 6GB |
| FP64 | 1/32 FP32 | 1/32 FP32 | 1/3 FP32 | 1/3 FP32 |
| TDP | 250W | 165W | 250W | 250W |
| GPU | GM200 | GM204 | GK110B | GK110 |
| Architecture | Maxwell 2 | Maxwell 2 | Kepler | Kepler |
| Transistor Count | 8B | 5.2B | 7.1B | 7.1B |
| Manufacturing Process | TSMC 28nm | TSMC 28nm | TSMC 28nm | TSMC 28nm |
| Launch Date | 03/17/2015 | 09/18/2014 | 02/18/2014 | 02/21/2013 |
| Launch Price | $999 | $549 | $999 | $999 |

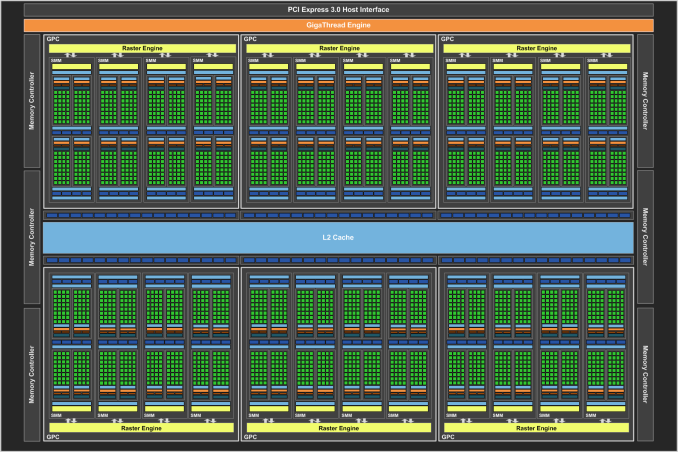

To do this NVIDIA has assembled a new Maxwell GPU, GM200 (aka Big Maxwell). We’ll dive into GM200 in detail a bit later, but from a high-level standpoint GM200 is the GM204 taken to its logical extreme. It’s bigger, faster, and yes, more power hungry than GM204 before it. In fact at 8 billion transistors occupying 601mm² it’s NVIDIA’s largest GPU ever. And for the first time in quite some time, virtually every last millimeter is dedicated to graphics performance, which coupled with Maxwell’s performance efficiency makes it a formidable foe.

Diving into the specs, GM200 can for most intents and purposes be considered a GM204 + 50%. It has 50% more CUDA cores, 50% more memory bandwidth, 50% more ROPs, and almost 50% more die size. Packing a fully enabled version of GM200, this gives the GTX Titan X 3072 CUDA cores and 192 texture units (spread over 24 SMMs), paired with 96 ROPs. Meanwhile considering that even the GM204-backed GTX 980 could outperform the GK110-backed GTX Titans and GTX 780 Ti thanks to Maxwell’s architectural improvements – one Maxwell CUDA core is quite a bit more capable than a Kepler CUDA core in practice, as we’ve seen – GTX Titan X is well geared to shoot well past the previous Titans and the GTX 980.
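The "GM204 + 50%" shorthand is easy to check against the specification table. The short Python sketch below does that arithmetic using only figures quoted in this article; the dictionary keys and the helper name `percent_increase` are illustrative choices, not anything NVIDIA publishes.

```python
# Figures copied from the specification table in this article.
GTX_TITAN_X = {"cuda_cores": 3072, "texture_units": 192, "rops": 96, "bus_width_bits": 384}
GTX_980 = {"cuda_cores": 2048, "texture_units": 128, "rops": 64, "bus_width_bits": 256}

SMM_COUNT_TITAN_X = 24  # "spread over 24 SMMs", per the text above


def percent_increase(new, old):
    """Return how much larger `new` is than `old`, in percent."""
    return (new / old - 1.0) * 100.0


# Per-resource increase of GTX Titan X over GTX 980.
increases = {
    name: percent_increase(GTX_TITAN_X[name], GTX_980[name])
    for name in GTX_TITAN_X
}

# Resources per SMM on the fully enabled GM200.
cores_per_smm = GTX_TITAN_X["cuda_cores"] // SMM_COUNT_TITAN_X
texture_units_per_smm = GTX_TITAN_X["texture_units"] // SMM_COUNT_TITAN_X

for name, pct in increases.items():
    print(f"{name}: +{pct:.0f}%")
print(f"CUDA cores per SMM: {cores_per_smm}")
print(f"Texture units per SMM: {texture_units_per_smm}")
```

Every resource in the table scales by the same factor, which is why the memory bandwidth figure (at an unchanged 7GHz memory clock) also lands at 50% more.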

Feeding GM200 is a 384-bit memory bus driving 12GB of GDDR5 clocked at 7GHz. Compared to the GTX Titan Black this is one of the few areas where GTX Titan X doesn’t have an advantage in raw specifications – there’s really nowhere to go until HBM is ready – however in this case numbers can be deceptive as NVIDIA has heavily invested in memory compression for Maxwell to get more out of the 336GB/sec of memory bandwidth they have available. The 12GB of VRAM on the other hand continues NVIDIA’s trend of equipping Titan cards with as much VRAM as they can handle, and should ensure that the GTX Titan X has VRAM to spare for years to come. Meanwhile sitting between the GPU’s functional units and the memory bus is a relatively massive 3MB of L2 cache, retaining the same 32K:1 cache:ROP ratio of Maxwell 2 and giving the GPU more cache than ever before to try to keep memory operations off of the memory bus.
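For readers who want to see where the 336GB/sec and 32K:1 figures come from, here is a minimal Python sketch. It assumes the "7GHz" memory clock is read as an effective data rate of 7 Gbit/sec per pin, which is the usual convention for quoted GDDR5 speeds; the function name is purely illustrative.

```python
def memory_bandwidth_gb_per_s(bus_width_bits, data_rate_gbps):
    """Peak bandwidth in GB/sec: bus width in bytes times per-pin data rate.

    Assumes `data_rate_gbps` is the effective per-pin rate in Gbit/sec,
    i.e. the "7GHz" figure from the specification table.
    """
    return bus_width_bits / 8 * data_rate_gbps


titan_x_bandwidth = memory_bandwidth_gb_per_s(384, 7.0)
gtx_980_bandwidth = memory_bandwidth_gb_per_s(256, 7.0)

# L2 cache per ROP: 3MB of L2 shared across 96 ROPs, expressed in KB.
l2_kb_per_rop = 3 * 1024 / 96

print(f"GTX Titan X: {titan_x_bandwidth:.0f} GB/sec")
print(f"GTX 980:     {gtx_980_bandwidth:.0f} GB/sec")
print(f"L2 per ROP:  {l2_kb_per_rop:.0f} KB")
```

These are peak theoretical numbers; the memory compression mentioned above changes how much useful data fits in that bandwidth, not the raw figure itself.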

As for clockspeeds, as with the rest of the Maxwell lineup GTX Titan X is getting a solid clockspeed bump from its Kepler predecessor. The base clockspeed is up to 1GHz (reported as 1002MHz by NVIDIA’s tools) while the boost clock is 1075MHz. This is roughly 100MHz (~10%) ahead of the GTX Titan Black and will further push the GTX Titan X ahead. However as is common with larger GPUs, NVIDIA has backed off on clockspeeds a bit compared to the smaller GM204, so GTX Titan X won’t clock quite as high as GTX 980 and the overall performance difference on paper is closer to 33% when comparing boost clocks.
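The "closer to 33%" figure can be reproduced with a rough on-paper model in which shader throughput scales with CUDA core count times clockspeed. That model is a simplification assumed here for illustration (it ignores memory bandwidth, boost behavior under the TDP limit, and everything else that shapes real performance), and `relative_throughput` is a hypothetical helper name.

```python
def relative_throughput(cores_a, clock_a_mhz, cores_b, clock_b_mhz):
    """Ratio of (cores x clock) for card A over card B, a crude paper metric."""
    return (cores_a * clock_a_mhz) / (cores_b * clock_b_mhz)


# GTX Titan X vs. GTX 980, using the clocks from the specification table.
boost_ratio = relative_throughput(3072, 1075, 2048, 1216)
base_ratio = relative_throughput(3072, 1000, 2048, 1126)

print(f"Boost clocks: {boost_ratio:.2f}x")
print(f"Base clocks:  {base_ratio:.2f}x")
```

Both ratios come out near 1.33x, so the 50% advantage in CUDA cores shrinks to roughly a one-third advantage on paper once the lower clockspeeds are factored in.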

Power consumption on the other hand is right where we’d expect it to be for a Titan class card. NVIDIA’s official TDP for GTX Titan X is 250W, the same as the previous single-GPU Titan cards (and other consumer GK110 cards). Like the original GTX Titan, expect GTX Titan X to spend a fair bit of its time TDP-bound; 250W is generous – a 51% increase over GTX 980 – but then again so is the number of transistors that need to be driven. Overall this puts GTX Titan X on the high side of the power consumption curve (just like GTX Titan before it), but it’s the price for that level of performance. Practically speaking 250W is something of a sweet spot for NVIDIA, as they know how to efficiently dissipate that much heat and it ensures GTX Titan X is a drop-in replacement for GTX Titan/780 in any existing system designs.

Moving on, the competitive landscape right now will greatly favor NVIDIA. With AMD’s high-end having last been refreshed in 2013 and with the GM204 GTX 980 already ahead of the Radeon 290X, GTX Titan X further builds on NVIDIA’s lead. No other single-GPU card is able to touch it, and even GTX 980 is left in the dust. This leaves NVIDIA as the uncontested custodian of the single-GPU performance crown.

The only things that can really threaten the GTX Titan X at this time are multi-GPU configurations such as GTX 980 SLI and the Radeon R9 295X2, the latter of which is down to ~$699 these days and is certainly a potential spoiler for GTX Titan X. To be sure, when multi-GPU works right either of these configurations can shoot past a single GTX Titan X; however, when multi-GPU scaling falls apart we have the usual problem of such setups falling well behind a single powerful GPU. Such setups are always a risk in that regard, and consequently as a single-GPU card GTX Titan X offers the best bet for consistent performance.

NVIDIA of course is well aware of this, and with GTX 980 already fending off the R9 290X NVIDIA is free to price GTX Titan X as they please. GTX Titan X is being positioned as a luxury video card (like the original GTX Titan) and NVIDIA is none too ashamed to price it accordingly. Complicating matters slightly however is the fact that unlike the Kepler Titan cards the GTX Titan X is not a prosumer-level compute monster. As we’ll see it lacks its predecessor’s awesome double precision performance, so NVIDIA does need to treat this latest Titan as a consumer gaming card rather than a gaming + entry level compute card as was the case with the original GTX Titan.

In any case, with the previous GTX Titan and GTX Titan Black launching at $999, it should come as no surprise that this is where GTX Titan X is launching as well. NVIDIA saw quite a bit of success with the original GTX Titan at this price, and with GTX Titan X they are shooting for the same luxury market once again. Consequently GTX Titan X will be the fastest single-GPU card you can buy, but it will once again cost quite a bit to get. For our part we'd like to see GTX Titan X priced lower - say closer to the $700 price tag of GTX 780 Ti - but it's hard to argue with NVIDIA's success on the original GTX Titan.

Finally, for launch availability this will be a hard launch with a slight twist. Rather than starting with retail and etail partners such as Newegg, NVIDIA is going to kick things off by selling cards directly, while partners will start to sell cards in a few weeks. For a card like GTX Titan X, NVIDIA selling cards directly is not a huge stretch; with all cards being identical reference cards, partners largely serve as distributors and technical support for buyers.

Meanwhile selling GTX Titan X directly also allowed NVIDIA to keep the card under wraps for longer while still offering a hard launch, as it left fewer avenues for leaks through partners. On the other hand I'm curious how partners will respond to being cut out of the loop like this, even if it is just temporary.

Spring 2015 GPU Pricing Comparison

| AMD | Price | NVIDIA |
|---|---|---|
| | $999 | GeForce GTX Titan X |
| Radeon R9 295X2 | $699 | |
| | $550 | GeForce GTX 980 |
| Radeon R9 290X | $350 | |
| | $330 | GeForce GTX 970 |
| Radeon R9 290 | $270 | |

276 Comments


looncraz - Tuesday, March 17, 2015

If the most recent slides (allegedly leaked from AMD) hold true, the 390X will be at least as fast as the Titan X, though with only 8GB of RAM (but HBM!).

A straight 4096SP GCN 1.2/3 GPU would be a close match-up already, but any other improvements made along the way will potentially give the 390X a fairly healthy launch-day lead.

I think nVidia wanted to keep AMD in the dark as much as possible so that they could not position themselves to take more advantage of this, but AMD decided to hold out, apparently, until May/June (even though they apparently already have some inventory on hand) rather than give nVidia a chance to revise the Titan X before launch.

nVidia blinked, it seems, after it became apparent AMD was just going to wait out the clock with their current inventory.

zepi - Wednesday, March 18, 2015

Unless AMD has achieved a considerable increase in perf/W, they are going to have a really hard time tuning those 4k shaders to a reasonable frequency without being a 450W card.

Not that being a 500W card is necessarily a deal breaker for everyone, but in practice cooling a 450W card without causing an ear-shattering level of noise is very difficult compared to cooling a 250W card.

Let us wait and hope, since AMD really would need to get a break and make some money on this one...

looncraz - Wednesday, March 18, 2015

Very true. We know that with HBM there should already be a fairly beefy power savings (~20-30W vs 290X it seems).

That doesn't buy them room for 1,280 more SPs, of course, but it should get them a healthy 256 of them. Then, GCN 1.3 vs 1.1 should have power advantages as well. GCN 1.2 vs 1.0 (R9 285 vs R9 280) with 1792 SPs showed a 60W improvement; if we assume GCN 1.1 to GCN 1.3 shows a similar trend, the 390X should be pulling only about 15W more than the 290X with the rumored specs without any other improvements.

Of course, the same math says the 290X should be drawing 350W, but that's because it assumes all the power is in the SPs... But I do think it reveals that AMD could possibly do it without drawing much, if any, more power without making any unprecedented improvements.

Braincruser - Wednesday, March 18, 2015

Yeah, but the question is, how well will the memory survive on top of a 300W GPU?

Because the first part in a graphics card to die from high temperatures is the VRAM.

looncraz - Thursday, March 19, 2015

It will be to the side, on a 2.5d interposer, I believe.

GPU thermal energy will move through the path of least resistance (technically, to the area with the greatest deltaT, but regulated by the material thermal conductivity coefficient), which should be into the heatsink or water block. I'm not sure, but I'd think the chips could operate in the same temperature range as the GPU, but maybe not. It may be necessary to keep them thermally isolated. Which shouldn't be too difficult, maybe as simple as not using thermal pads at all for the memory and allowing them to passively dissipate heat (or through interposer mounted heatsinks).

It will be interesting to see what they have done to solve the potential issues, that's for sure.

Xenonite - Thursday, March 19, 2015

Yes, I agree that AMD would be able to absolutely destroy NVIDIA on the performance front if they designed a 500W GPU and left the PCB and waterblock design to their AIB partners.

I would also absolutely love to see what kind of performance a 500W or even a 1kW graphics card would be able to muster; however, since a relatively constant 60fps presented with less than about 100ms of total system latency has been deemed sufficient for a "smooth and responsive" gaming experience, I simply can't imagine such a card ever seeing the light of day.

And while I can understand everyone likes to pretend that they are saving the planet with their <150W GPUs, the argument that such a TDP would be very difficult to cool does not really hold much water IMHO.

If, for instance, the card was designed from the ground up to dissipate its heat load over multiple 200W~300W GPUs, connected via a very-high-speed, N-directional data interconnect bus, the card could easily and (most importantly) quietly be cooled with chilled-watercooling dissipating into a few "quad-fan" radiators. Practically, 4 GM200-size GPUs could be placed back-to-back on the PCB, with each one rendering a quarter of the current frame via shared, high-speed frame buffers (thereby eliminating SLI-induced microstutter and "frame-pacing" lag). Cooling would then be as simple as installing 4 standard gpu-watercooling loops with each loop's radiator only having to dissipate the TDP of a single GPU module.

naxeem - Tuesday, March 24, 2015

They did solve that problem with a water-cooling solution. 390X WCE is probably what we'll get.

ShieTar - Wednesday, March 18, 2015

Who says they don't allow it? EVGA have already announced two special models, a superclocked one and one with a watercooling-block:

http://eu.evga.com/articles/00918/EVGA-GeForce-GTX...

Wreckage - Tuesday, March 17, 2015

If by fast you mean June or July. I'm more interested in a 980ti so I don't need a new power supply.

ArmedandDangerous - Saturday, March 21, 2015

There won't ever be a 980 Ti if you understand Nvidia's naming schemes. Ti's are for unlocked parts; there's nothing further to unlock on the 980's GM204.