Synology DS2015xs Review: An ARM-based 10G NAS

by Ganesh T S on February 27, 2015 8:20 AM EST

Posted in: NAS, Storage, Arm, 10G Ethernet, Synology, Enterprise

Direct-Attached Storage Performance

The presence of 10G ports on the Synology DS2015xs presents some interesting use-cases. As an example, video production houses have a need for high-speed storage. Usually, direct-attached storage units suffice. Thunderbolt is popular for this purpose, both in single-user mode and as part of a SAN. However, as 10G network interfaces become common and affordable, there is scope for NAS units to act as direct-attached storage units as well. In order to evaluate the DAS performance of the Synology DS2015xs, we utilized the DAS testbed augmented with an appropriate CNA (converged network adapter), as described in the previous section. To get an idea of the available performance for different workloads, we ran a couple of quick artificial benchmarks along with a subset of our DAS test suite.

CIFS

In the first case, we evaluate the performance of a CIFS share created in a RAID 5 volume. One aspect to note is that the direct link between the NAS and the testbed is configured with an MTU of 9000 (compared to the default of 1500 used for the NAS benchmarks).
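To put the MTU change in perspective, the following is a rough sketch of how much of each on-wire frame carries TCP payload at the two MTU settings. It assumes standard Ethernet framing overhead, IPv4 and TCP headers without options, and 1 MB = 10^6 bytes; none of these figures come from Synology or from our measurements, and the function names are ours.

```python
# Per-frame overhead on the wire for standard Ethernet, in bytes:
# preamble + start-of-frame (8), MAC header (14), FCS (4), inter-frame gap (12).
ETHERNET_OVERHEAD = 8 + 14 + 4 + 12

# Assumption: IPv4 and TCP headers without options (20 bytes each).
# Options such as TCP timestamps would reduce the payload a little further.
IP_TCP_HEADERS = 20 + 20


def tcp_payload_efficiency(mtu):
    """Fraction of on-wire bytes that carry TCP payload for full-sized frames."""
    return (mtu - IP_TCP_HEADERS) / (mtu + ETHERNET_OVERHEAD)


def max_payload_mbps(link_gbps, mtu):
    """Upper bound on TCP payload throughput in MBps (1 MB = 10**6 bytes)."""
    return link_gbps * 1000 / 8 * tcp_payload_efficiency(mtu)


if __name__ == "__main__":
    for mtu in (1500, 9000):
        eff = tcp_payload_efficiency(mtu)
        print(f"MTU {mtu}: {eff:.2%} payload, "
              f"up to {max_payload_mbps(10, mtu):.0f} MBps per 10G link")
```

The gain in wire efficiency is only a few percentage points. In practice, the larger benefit of jumbo frames tends to be the lower number of packets that each side has to process per second.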

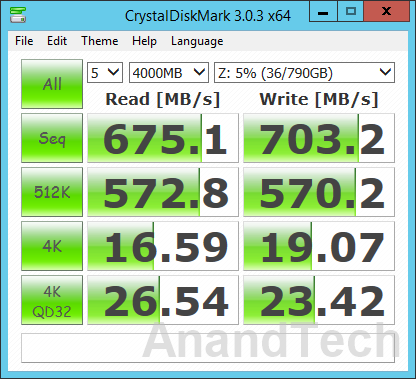

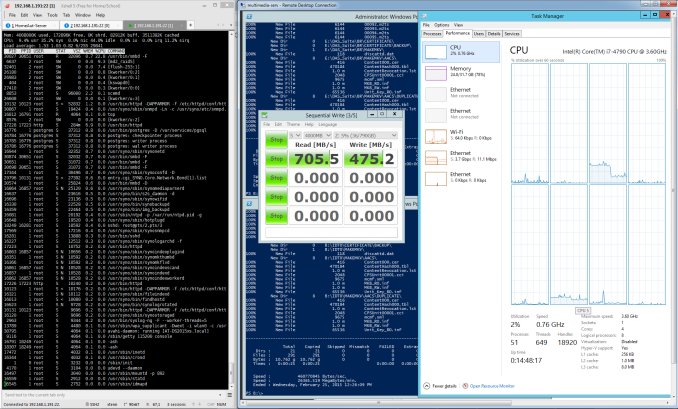

Our first artificial benchmark is CrystalDiskMark, which tackles sequential accesses as well as 512 KB and 4 KB random accesses. For 4 KB accesses, the benchmark is repeated at a queue depth of 32. As the screenshot above shows, the Synology DS2015xs manages around 675 MBps reads and 703 MBps writes. The write number matches the claims made by Synology in their marketing material, but the 675 MBps read speed is a far cry from the promised 1930 MBps. We moved on to ATTO, another artificial benchmark, to check if the results were any different.

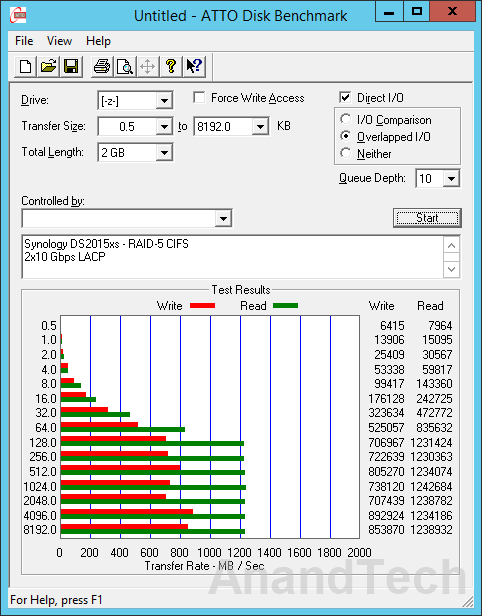

ATTO Disk Benchmark tackles sequential accesses with different block sizes. We configured a queue depth of 10 and a master file size of 4 GB for accesses with block sizes ranging from 512 bytes to 8 MB and the results are presented above. In this benchmark, we do see 1 MB block sizes giving read speeds of around 1214 MBps.

Synology DS2015xs - 2x10 Gbps LACP - RAID-5 CIFS DAS Performance (MBps)

| Workload | Read | Write |
| --- | --- | --- |
| Photos | 594.69 | 363.47 |
| Videos | 915.95 | 500.09 |
| Blu-ray Folder | 949.32 | 543.93 |

For real-world performance evaluation, we wrote and read back multi-gigabyte folders of photos, videos and Blu-ray files. The results are presented in the table above. These numbers show that it is possible to achieve read speeds close to 1 GBps for real-life workloads. The advantage of a unit like the DS2015xs is that the 10G interfaces can be used as a DAS interface, while the other two 1G ports can connect the unit to the rest of the network for sharing the contents seamlessly with other computers.

iSCSI

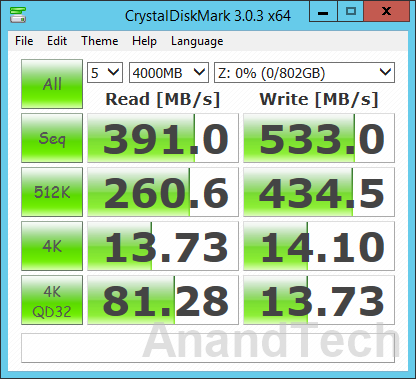

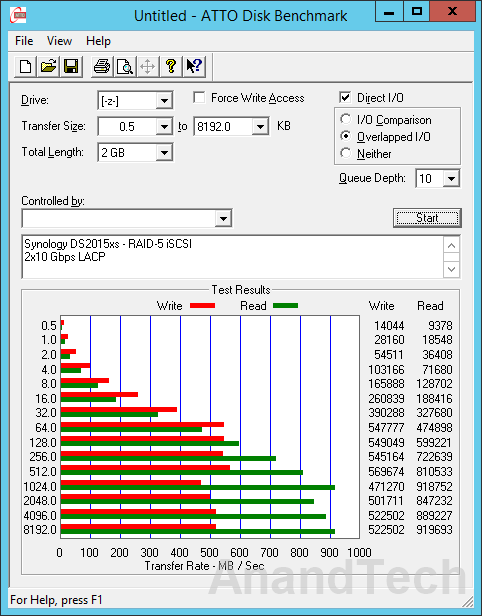

We configured a block-level (Single LUN on RAID) iSCSI LUN in RAID-5 using all available disks. Network settings were retained from the previous evaluation environment. The same benchmarks were repeated in this configuration also.

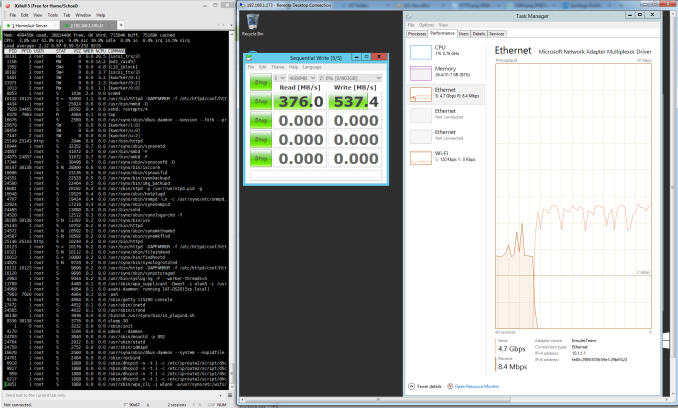

The iSCSI performance seems to be a bit off compared to what we got with CIFS. Results from the real-world performance evaluation suite are presented in the table below. These numbers track what we observed in the artificial benchmarks too.

Synology DS2015xs - 2x10 Gbps LACP - RAID-5 iSCSI DAS Performance (MBps)

| Workload | Read | Write |
| --- | --- | --- |
| Photos | 535.28 | 532.54 |
| Videos | 770.41 | 483.97 |
| Blu-ray Folder | 734.51 | 505.3 |

Performance Analysis

The performance numbers that we obtained with teamed ports (20 Gbps) were frankly underwhelming. The more worrisome aspect was that we couldn't replicate Synology's claims of upwards of 1900 MBps throughput for reads. In order to determine if there were any issues with our particular setup, we wanted to isolate the problem to either the disk subsystem on the NAS side or the network configuration. Unfortunately, Synology doesn't provide any tools to evaluate them separately. For optimal functioning, 10G links require careful configuration on either side.

iPerf is the tool of choice for many when it comes to ensuring that the network segment is operating optimally. Unfortunately, iPerf for DSM requires an optware package that is not yet available for the Alpine platform. On the positive side, Synology had uploaded the tool chain for Alpine on SourceForge - this helped us to cross-compile iPerf from source for the DS2015xs. Armed with iPerf on both the NAS and the testbed side, we proceeded to evaluate the links operating simultaneously without the teaming overhead.
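For readers unfamiliar with this class of tool, the sketch below shows the basic idea behind a memory-to-memory TCP throughput test: one side sends bytes generated in RAM, the other side discards them, and no disks are involved. It is not iPerf and not what we ran on the NAS. It is a minimal stand-in that runs both ends on the loopback interface of a single machine, and the function names and sizes are our own choices for illustration.

```python
import socket
import threading
import time

CHUNK = 64 * 1024  # bytes handed to each send/recv call


def _sink(server_sock, result):
    """Accept one connection and count bytes until the sender closes."""
    conn, _ = server_sock.accept()
    total = 0
    with conn:
        while True:
            data = conn.recv(CHUNK)
            if not data:
                break
            total += len(data)
    result["bytes"] = total


def measure_loopback(total_bytes=32 * 1024 * 1024):
    """Push total_bytes over one TCP stream; return (bytes_received, MBps)."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
    server.listen(1)
    port = server.getsockname()[1]

    result = {}
    receiver = threading.Thread(target=_sink, args=(server, result))
    receiver.start()

    payload = b"\0" * CHUNK  # data comes from memory, never from disk
    sent = 0
    start = time.perf_counter()
    with socket.create_connection(("127.0.0.1", port)) as client:
        while sent < total_bytes:
            n = min(CHUNK, total_bytes - sent)
            client.sendall(payload[:n])
            sent += n
    receiver.join()  # wait until the receiver has drained everything
    elapsed = time.perf_counter() - start
    server.close()
    return result["bytes"], result["bytes"] / elapsed / 1e6


if __name__ == "__main__":
    received, mbps = measure_loopback()
    print(f"received {received} bytes at roughly {mbps:.0f} MBps")
```

Because the sender and receiver each run a single stream in a single thread, a test of this kind can itself become CPU-bound well before a fast link is saturated.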

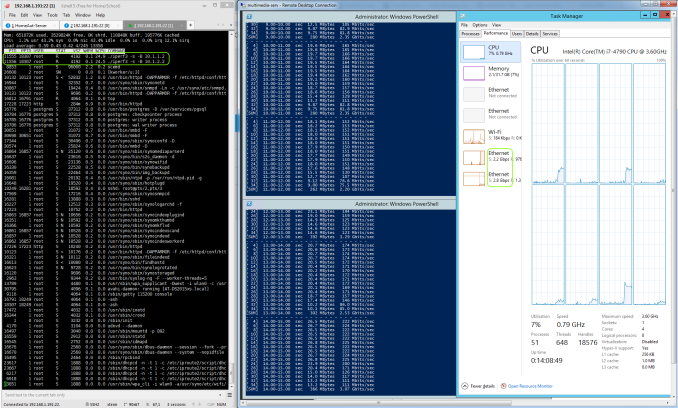

The screenshot above shows that the two links together saturated at around 5 Gbps (out of the theoretically possible 20 Gbps), but the culprit was our cross-compiled iPerf executable (with each instance completely saturating one core - 25% of the CPU).

In the CIFS case, the smbd process is not multi-threaded, and this severely limits how fully the 10G links can be utilized.

In the iSCSI case, the iscsi_trx process also seems to saturate one CPU core, leading to similar results for 10G link utilization.

On the whole, the 10G links are getting utilized, but not to the full possible extent. The utilization is definitely more than, say, four single GbE links teamed together, but the presence of two 10G links had us expecting more from the unit as a DAS.
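The comparison can be made concrete with a quick calculation. The sketch below takes a few of the throughput figures reported in this section and expresses them as a fraction of the raw line rate of four teamed GbE links and of the two teamed 10G links. It assumes 1 MB = 10^6 bytes and ignores protocol overhead, so the percentages are approximate.

```python
# Throughput figures quoted in this review, in MBps.
MEASURED_MBPS = {
    "CrystalDiskMark read (CIFS)": 675,
    "CrystalDiskMark write (CIFS)": 703,
    "ATTO 1 MB read (CIFS)": 1214,
    "Blu-ray folder read (CIFS)": 949.32,
    "Videos read (iSCSI)": 770.41,
}


def line_rate_mbps(gbps):
    """Raw line rate in MBps, assuming 1 MB = 10**6 bytes and no overhead."""
    return gbps * 1000 / 8


def utilization(measured_mbps, link_gbps):
    """Measured throughput as a fraction of the raw line rate."""
    return measured_mbps / line_rate_mbps(link_gbps)


if __name__ == "__main__":
    for name, mbps in MEASURED_MBPS.items():
        print(f"{name}: {utilization(mbps, 4):.0%} of 4x1G, "
              f"{utilization(mbps, 20):.0%} of 2x10G")
```

Every figure in the list exceeds what four teamed GbE links could carry, yet even the best of them (the ATTO read result) stays below half of the 20 Gbps aggregate.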

49 Comments


chrysrobyn - Friday, February 27, 2015 - link

Is there one of these COTS boxes that runs any flavor of ZFS?

SirGCal - Friday, February 27, 2015 - link

They run Syn's own format...But I still don't understand why one would use RAID 5 only on an 8 drive setup. To me the point is all about data protection on site (most secure going off site) but that still screams for RAID 6 or RAIDZ2 at least for 8 drive configurations. And using SSDs for performance fine but if that was the requirement, there are M.2 drives out now doing 2M/sec transfers... These fall to storage which I want performance with 4, 6, 8 TB drives in double parity protection formats.

Kevin G - Friday, February 27, 2015 - link

I think you mean 2 GB/s transfers. Though the M.2 cards capable of doing so are currently OEM only with retail availability set for around May.

Though I'll second your ideas about RAID6 or RAIDZ2: rebuild times can take days and that is a significant amount of time to be running without any redundancy with so many drives.

SirGCal - Friday, February 27, 2015 - link

Yes I did mean 2G, thanks for the corrections. It was early.

JKJK - Monday, March 2, 2015 - link

My Areca 1882 ix-16 raid controller uses ~12 hours to rebuild a 15x4TB raid with WD RE4 drives. I'm quite dissappointed with the performance of most "prouser" nas boxes. Even enterprise qnaps can't compete with a decent areca controller.

It's time some one built som real NAS boxes, not this crap we're seeing today.

JKJK - Monday, March 2, 2015 - link

Forgot to mention it's a Raid 6

vol7ron - Friday, February 27, 2015 - link

From what I've read (not what I've seen), I can confirm that RAID-6 is the best option for large drives these days.

If I recall correctly, during a rebuild after a drive failure (new drive added) there have been reports of bad reads from another "good" drive. This means that the parity drive is not deep enough to recover the lost data. Adding more redundancy, will permit you to have more failures and recover when an unexpected one appears.

I think the finding was also that as drives increase in size (more terabytes), the chance of errors and bad sectors on "good" drives increases significantly. So even if a drive hasn't failed, it's data is no longer captured and the benefit of the redundancy is lost.

Lesson learned: increase the parity depth and replace drives when experiencing bad sectors/reads, not just when drives "fail".

Romulous - Sunday, March 1, 2015 - link

Another benefit of RAID 6 besides 2 drives being able to die, is the prevention of bit rot. In Raid 5, if i have a corrupt block, and one block of parity data, it wont know which one is correct. However since RAID 6 has 2 parity blocks for the same data block, its got a better chance if figuring it out.

802.11at - Friday, February 27, 2015 - link

RAID5 is evil. RAID10 is where it's at. ;-)

seanleeforever - Friday, February 27, 2015 - link

802.11at:cannot tell whether you are serious or not. but

RAID 10 can survive a single disk failure, RAID 6 can survive a failure of two member disks. personally i would NEVER use raid 10 because your chance of losing data is much greater than any raid that doesn't involve 0 (RAID 0 was a afterthought, it was never intended, thus called 0).

RAID 6 or RAID DP are the only ones used in datacenter for EMC or Netapp.