Hands On: Apple's iMac with Retina Display

by Ryan Smith on October 16, 2014 4:15 PM EST

We just got done with our hands-on time with Apple’s new products, and we’ll start with what’s likely the sneakiest of them, the iMac with Retina Display.

Why “sneaky”? The answer is all in the HiDPI display, which Apple calls the “Retina 5K Display”. The retina display is definitely the star of the new iMac, as the rest of the hardware is largely a minor specification bump from last year’s model. In fact, with the screen turned off you’d be hard pressed to tell the 2013 (non-retina) and new retina models apart, but the difference is immediately evident once the screen is on.

At 5120x2880 pixels, the new Retina 5K Display is precisely 4x the pixels of the 2560x1440 panel in last year’s model. What this means is that Apple can tap their standard bag of tricks to handle applications of differing retina capability and get all of it to look reasonably good. This also means that 2560x1440 content – including widgets – will scale up nicely to the new resolution. Apple does not discuss whom they have sourced the panel from, but given the timing it’s likely the same panel that is in Dell’s recently announced 27” 5K monitor.
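To put numbers on the 4x figure, here is a quick sketch in Python. The names are ours and purely illustrative; the only inputs are the two resolutions quoted above. It shows that the pixel count quadruples because each axis doubles, which is what lets one pixel of 2560x1440 content map onto a clean 2x2 block of physical pixels.

```python
# Resolutions quoted in the article; all names here are illustrative only.
OLD_RES = (2560, 1440)   # panel in last year's model
NEW_RES = (5120, 2880)   # Retina 5K Display

def pixel_count(res):
    """Total pixels for a (width, height) resolution."""
    width, height = res
    return width * height

# Ratio of total pixels between the two panels.
pixel_ratio = pixel_count(NEW_RES) / pixel_count(OLD_RES)

# Per-axis scale factor: how many physical pixels cover one old pixel.
scale = (NEW_RES[0] // OLD_RES[0], NEW_RES[1] // OLD_RES[1])

print(f"{pixel_count(OLD_RES):,} -> {pixel_count(NEW_RES):,} pixels ({pixel_ratio:.0f}x)")
print(f"per-axis scale: {scale[0]}x{scale[1]}")
```

Because the scale factor is an integer on both axes, no fractional resampling is needed for content authored at the old resolution.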

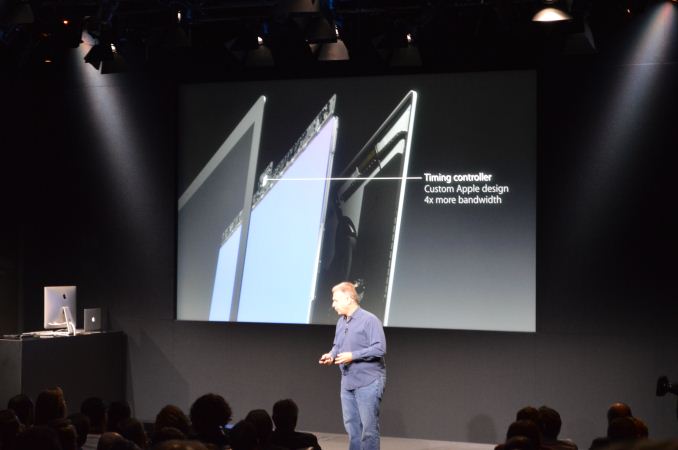

Much more interesting is how Apple is driving it. Since no one has a 5K timing controller (TCON) yet, Apple went and built their own. This is the first time we’re aware of Apple doing such a thing for a Mac, but it’s likely they just haven’t talked about it before. In any case, Apple was kind enough to confirm that they are driving the new iMac’s display with a single TCON. This is not a multi-tile display, but instead is a single 5120x2880 mode.

This also means that since it isn’t multi-tile, Apple would need to drive it over a single DisplayPort connection, which is actually impossible with conventional DisplayPort HBR2. We’re still getting to the bottom of how Apple is doing this (and hence the sneaky nature of the iMac), but currently our best theory is that Apple is running an overclocked DisplayPort/eDP interface along with some very low overhead timings to get just enough bandwidth for the job. Since the iMac is an all-in-one device, Apple is more or less free to violate specifications and do what they want so long as it isn’t advertised as DisplayPort and doesn’t interact with 3rd party devices.
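A rough back-of-the-envelope check shows why conventional HBR2 falls short. The sketch below is ours, not Apple's math: it assumes a 60Hz refresh rate and 24 bits per pixel (neither is confirmed here), and it ignores blanking entirely, so the real requirement would be somewhat higher than what it computes. The HBR2 figures used are the standard 5.4Gbps per lane over 4 lanes with 8b/10b encoding.

```python
# Can 5120x2880 fit in a conventional 4-lane DisplayPort HBR2 link?
# Assumptions (not from the article): 60 Hz refresh, 24 bits per pixel,
# and zero blanking overhead, which understates the real requirement.

HBR2_LANE_RATE_GBPS = 5.4      # raw line rate per lane for HBR2
LANES = 4                      # a full DisplayPort main link
ENCODING_EFFICIENCY = 8 / 10   # 8b/10b line coding

def payload_bandwidth_gbps(lane_rate_gbps, lanes=LANES):
    """Usable bandwidth after line-coding overhead."""
    return lane_rate_gbps * lanes * ENCODING_EFFICIENCY

def active_pixel_rate_gbps(width, height, refresh_hz=60, bits_per_pixel=24):
    """Bandwidth needed for the visible pixels alone (no blanking)."""
    return width * height * refresh_hz * bits_per_pixel / 1e9

available = payload_bandwidth_gbps(HBR2_LANE_RATE_GBPS)
required = active_pixel_rate_gbps(5120, 2880)

# Per-lane raw rate that would be needed to carry the active pixels.
min_lane_rate = required / (LANES * ENCODING_EFFICIENCY)

print(f"HBR2 payload:     {available:.2f} Gbps")
print(f"5K active pixels: {required:.2f} Gbps")
print(f"min lane rate:    {min_lane_rate:.2f} Gbps (HBR2 is {HBR2_LANE_RATE_GBPS})")
```

Under these assumptions the active pixels alone need roughly 21.2Gbps against about 17.3Gbps of HBR2 payload, so each lane would have to run well above its specified 5.4Gbps before blanking is even counted. That is consistent with the overclocked-link theory, though it does not confirm it.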

Update: And for anyone wondering whether you can drive the 5K display as an external display using Target Display Mode, Apple has confirmed that you cannot.

Meanwhile driving the new display are AMD’s Radeon R9 M290X and R9 M295X, which replace the former NVIDIA GTX 700M parts. We don’t have any performance data on the M295X, though our best guess is to expect R9 285-like performance (with a large over/under). If Apple is fudging the DisplayPort specification to get a single DisplayPort stream, then no doubt AMD has been helping on this matter as one of the most prominent DisplayPort supporters.

The rest of the package is very similar to the 2013 iMac. It comes with an Intel Haswell desktop class CPU paired with 8GB or more RAM, 802.11ac support, and Apple’s SSD + HDD Fusion drive setup. Apple now offers a higher speed CPU upgrade option that goes up to 4GHz (4.4GHz Boost) – likely the Core i7-4790K – that should make the high-end iMac about 10% faster than last year’s model.

83 Comments

hpglow - Thursday, October 16, 2014 - link

Relying on the compiler to determine order, parallelisms, and branch predictions was too ambitious. Borderline insane to think it was possible at this point in time. Despite the large transistor count it would make a better coprocessor.

widL - Thursday, October 16, 2014 - link

The i7 CPU is actually the 4790K, since the 4790 has a 3.6 GHz base clock.

TiGr1982 - Thursday, October 16, 2014 - link

That is probably the case, because their website states: "Configurable to 4.0GHz quad-core Intel Core i7 (Turbo Boost up to 4.4GHz)"

Laxaa - Thursday, October 16, 2014 - link

Most exciting Apple announcement in a while for me. The price is surprisingly competitive as well.

lilkwarrior - Friday, October 17, 2014 - link

Are you kidding me? No DisplayPort 1.3, no Thunderbolt 3, and not even Broadwell? They didn't even bother adding USB Type-C. They should have done what they did with the Mac Pro last year: made such things part of the product and just allowed people to pre-order.

This device admittedly still blows away alternatives like the Dell 5K monitor for mainstream professionals w/o intensive power needs (otherwise they would've opted for a specialized workstation or a Mac Pro).

SirKnobsworth - Friday, October 17, 2014 - link

There are no DP 1.3 or USB Type-C products on the market right now and probably won't be for several months. The TB3 spec has not been finalized and won't be until next year with Skylake. The retina iMacs use desktop class processors which Intel is skipping with Broadwell. I really don't see what the problem is.

jameskatt - Friday, October 17, 2014 - link

ARE YOU KIDDING US?? Duh: DisplayPort 1.3, Thunderbolt 3, USB Type-C and Intel's Broadwell Desktop CPUs are ALL VAPORWARE. They aren't out yet. Double Duh.

Apple cannot make products out of vaporware. That is why they are not in the new iMac.

In fact, since no one else makes a 5K Timing Controller for the monitor, even Apple had to design and manufacture the timing controller chip itself.

jameskatt - Friday, October 17, 2014 - link

The DisplayPort 1.3 standard was just released in September 2014. So no one has the parts for it yet. Apple had to custom modify the internal DisplayPort 1.2 hardware to run it like DisplayPort 1.3 internally.

abrowne1993 - Thursday, October 16, 2014 - link

Too bad this thing isn't using the 970/980M from Nvidia. When are we going to see displays with DisplayPort 1.3? Should be able to do 5K with no compromises, right?

anandreader106 - Thursday, October 16, 2014 - link

"Too bad this thing isn't using the 970/980M from Nvidia."

I'm pretty sure Apple weighed the pros and cons of using the 970/980M. Ultimately the better deal was to partner with AMD. We will find out the reasons for this partnership in the future, but for now I wouldn't jump to conclusions and assume that the consumer would have been better off with an Nvidia part.