Intel and Micron (IMFT) Announce World's First 128Gb 20nm MLC NAND

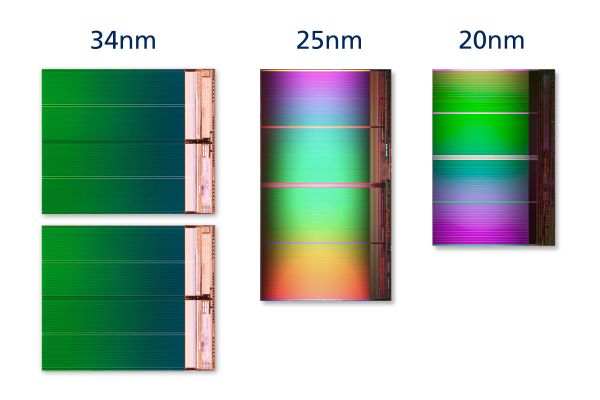

by Anand Lal Shimpi on December 6, 2011 12:17 PM EST

Earlier this year, Intel and Micron's joint NAND manufacturing venture (IMFT) announced it had produced 64Gb (8GB) MLC NAND on a 20nm process. Most IMFT NAND used in SSDs is built on a 25nm process; the move to 20nm reduces die size and in turn reduces cost over time. A single 8GB 2-bit-per-cell MLC NAND die built on IMFT's 25nm process measures 167mm², while the same capacity on IMFT's 20nm process is 118mm². Early on in any new process wafers are more expensive, but over time NAND costs should go down as they are more a function of die size than process technology.
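To put the die-size numbers in perspective, here is a minimal Python sketch of the arithmetic. The two areas come from the figures above; the function names are illustrative, and the die-count ratio ignores wafer edge loss, scribe lines, and yield, so treat it as a rough upper bound rather than a cost model.

```python
# Die areas quoted above for a 64Gb (8GB) 2-bit-per-cell MLC die.
AREA_25NM_MM2 = 167.0
AREA_20NM_MM2 = 118.0


def area_reduction(old_mm2: float, new_mm2: float) -> float:
    """Fraction of die area saved by moving from the old process to the new one."""
    return (old_mm2 - new_mm2) / old_mm2


def die_count_ratio(old_mm2: float, new_mm2: float) -> float:
    """Roughly how many more dies fit in the same silicon area.

    Assumption: ignores edge loss, scribe lines, and yield differences.
    """
    return old_mm2 / new_mm2


reduction = area_reduction(AREA_25NM_MM2, AREA_20NM_MM2)
ratio = die_count_ratio(AREA_25NM_MM2, AREA_20NM_MM2)
print(f"Area reduction: {reduction:.1%}")
print(f"Dies per unit area: {ratio:.2f}x")
```

In other words, the 20nm die is roughly 29% smaller, which works out to about 1.4 times as many dies from the same silicon area before yield is taken into account.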

Today Intel and Micron are both announcing a second generation 20nm part at 128Gb (16GB) capacity. This isn't just a capacity increase, as the 128Gb 20nm MLC NAND features an ONFI 3 interface rather than the ONFI 2.x used in the earlier 64Gb announcement and older 25nm parts. ONFI 3 increases interface bandwidth from a max of 200MT/s (IMFT 25nm was limited to 166MT/s) to 333MT/s. This has a direct impact on sequential read performance, for example, as most of those operations tend to be interface bound. Note that when I'm talking about interface speed I'm referring to the maximum speed allowed between the SSD controller and the NAND itself (e.g. an 8-channel ONFI 3 controller would have 8 x 333MT/s interfaces to NAND).
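The 8-channel example can be turned into a rough peak figure. The sketch below assumes an 8-bit data bus per channel, so one transfer moves one byte; that bus width is an assumption on my part, not something stated above, and the function name is illustrative. The result is a theoretical ceiling between controller and NAND, not a drive's real-world throughput.

```python
def aggregate_mb_per_s(channels: int, mt_per_s: int, bus_width_bits: int = 8) -> float:
    """Peak controller-to-NAND bandwidth in MB/s across all channels.

    Assumption: each channel has a bus_width_bits-wide data bus (8 by default),
    and command/address overhead is ignored.
    """
    bytes_per_transfer = bus_width_bits / 8
    return channels * mt_per_s * bytes_per_transfer


# Interface speeds quoted above, in MT/s.
for label, rate in (("IMFT 25nm", 166), ("ONFI 2.x max", 200), ("ONFI 3", 333)):
    peak = aggregate_mb_per_s(channels=8, mt_per_s=rate)
    print(f"{label}: {peak:.0f} MB/s peak over 8 channels")
```

Under those assumptions an 8-channel controller goes from a ceiling of 1328MB/s on IMFT's 25nm parts to 2664MB/s with the ONFI 3 part.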

Alongside the faster interface speed is yet another increase in page size. The move to 25nm NAND brought about 8KB pages (up from 4KB); the 128Gb 20nm MLC NAND solution uses 16KB pages. Because of the changes to the interface speed and page size we won't see drives/controllers use the 128Gb devices for another 1 - 1.5 years. The reason for the delay in incorporating this NAND into SSDs is twofold: 1) a 128Gb 20nm die will be pretty big (2x the size of a 64Gb die), and it'll take time for yields to improve to the point where it's cost effective, and 2) a larger page size and new interface both require a revision to the controller & firmware. Intel and Micron have both confirmed that they will not be using the 128Gb parts in SSDs until 2013.

The 128Gb 20nm parts will go into mass production in Q2 2012, giving IMFT ample time to ramp up production and work on yields before more functional deployment inside SSDs. SSD makers (and other consumers of NAND) will be able to get octal die packages using 128Gb NAND, meaning a single package can feature up to 128GB of NAND. This also paves the way for 1TB SSDs with only eight chips, or 2TB SSDs with both sides of a 2.5" PCB populated. The new Ultrabook/MacBook Air gumstick SSD form factor will be able to accommodate 512GB - 1TB of NAND using these 128Gb ODP devices.
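The capacity figures follow from simple arithmetic, sketched below. The die density and the eight dies per package come from the paragraph above; the 16-package count for a double-sided 2.5" PCB is my assumption (eight per side), and the function names are illustrative.

```python
BITS_PER_BYTE = 8


def package_capacity_gb(die_gbit: int, dies_per_package: int) -> int:
    """Capacity of one NAND package in GB, given die density in Gbit."""
    return die_gbit * dies_per_package // BITS_PER_BYTE


def drive_capacity_gb(die_gbit: int, dies_per_package: int, packages: int) -> int:
    """Raw NAND capacity of a drive in GB (no spare area or overprovisioning removed)."""
    return package_capacity_gb(die_gbit, dies_per_package) * packages


odp_gb = package_capacity_gb(die_gbit=128, dies_per_package=8)
single_sided_gb = drive_capacity_gb(128, 8, packages=8)
# Assumption: a fully populated double-sided 2.5" PCB holds 16 packages.
double_sided_gb = drive_capacity_gb(128, 8, packages=16)

print(f"Octal die package: {odp_gb}GB")
print(f"8 packages: {single_sided_gb}GB")
print(f"16 packages: {double_sided_gb}GB")
```

By the same math, the 512GB - 1TB range quoted for the gumstick form factor would correspond to four to eight of these packages.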

While 128Gb parts are still some distance away, 64Gb 20nm NAND from IMFT is in mass production today. Again, don't expect to see the 64Gb parts in use in SSDs until the middle of 2012. Controller vendors need time to ensure support for the NAND and validate/cross-their-fingers-and-hope-it-works with their designs. IMFT also needs time to build up inventory and ensure good yields. Remember the 64Gb parts retain the ONFI 2.x support and 8KB page size of IMFT's 25nm NAND.

The big question is endurance; however, we won't see a reduction in write cycles this time around. IMFT's 20nm client-grade compute NAND (used in consumer SSDs) is designed for 3K - 5K write cycles, identical to its 25nm process. IMFT has moved to a new cell architecture with a much thinner floating gate. The 20nm process is also a high-K + metal gate design. Both changes contribute to maintaining endurance ratings while shrinking overall transistor dimensions.

SSD prices have fallen tremendously over the past few years. We're finally approaching $1/GB in many cases. With 20nm NAND due out next year, I'd say we're probably within a year of dropping below $1/GB.

35 Comments

DukeN - Tuesday, December 6, 2011 - link

Great, another sucker for the EV socialist propaganda.

This EV, environment-saving, green-this/green-that stuff is another excuse to rob us blind by Obama style communists.

Vote Bachmann next year to restore honor to our Christian Nation.

*Drives off in Hummer H2 chowing down 18oz steak firing machine guns into the horizon*

[\sarcasm]

Paul Tarnowski - Wednesday, December 7, 2011 - link

The only reason EV will ever really take off is because it's cheaper and you can run off battery longer. I know that seeing the running time gains on my UPS between running a 65W e6750 and a 65W i3 (mostly in chipset and idle, although the e6750 was overclocked), not to mention the switch between a >45W CF backlit TN and a <20W LED e-IPS, had provided me with even more incentives to upgrade.

Also, what's wrong with 18oz. steaks? (looks it up) 18 ounces is over half a kilo? Well...what's wrong with 510 gram steaks? Some of us are more meat eater than others. Now if you had included something with carbs, I'd be in agreement. That stuff will kill you.

Although I am feeling a little trepidatious about the steaks firing machine guns into the horizon. I'm not sure I can get behind an armed steak firing into the horizon. In fact, that could get annoying, I'd hate to see good ammo wasted. What steak has enough ammo just lying around to fire off indiscriminately? If you ain't practicing or killing, you ain't doing it right. Ammo ain't cheap, you know.

Wierdo - Thursday, December 8, 2011 - link

There's some evidence suggesting that eating more than 11oz of red meat per week can play a role in contracting some types of cancer. Otherwise, it's a good source of protein, iron and antioxidants.

GruntboyX - Tuesday, December 6, 2011 - link

I will have to admit I was one of those RAID-0 guys. But that was when SSDs were priced much higher than they are today.

I see no reason why your system drive shouldn't be an SSD now that 120GB is reasonably priced.

In fact I think this is pushing me to build my first file server. Low power, magnetic medium for bulk data storage and redundancy. SSD for the main computer for speed and low noise.

With these process improvements, I see the future is near in which magnetic medium will be relegated to long term and bulk storage. However, it's great because it puts pricing pressure on magnetic medium.

IvanAndreevich - Wednesday, December 7, 2011 - link

Dumbest post I've read today.

LordanSS - Tuesday, December 6, 2011 - link

I apologize for my "noobness", but this has got me thinking for a while now.

Cluster size for, say, NTFS partitions has been at around 4k for a while now (the default format size, let's put it that way). Years ago, the page size on these NAND devices was 4k as well, being then a "perfect match".

Now with the process and capacity changes... page sizes up to 8k (and in the near future, 16k)... is there any drawback on performance or anything if you're still using a 4k cluster size, and saving small files (4k and smaller) still? Degradation, wasted space, anything?

Thanks

Paul Tarnowski - Wednesday, December 7, 2011 - link

I'm too sleepy to Google the specifics, so this is off the top of my head:

The only possibility is that degradation speeds up, as the OS won't address a page for multiple files. And even there, depending on the implementation, the SSD controller should take care to spread the data over other, less used cells*. Otherwise you won't see any notable difference except size on disk for small files.

iwod - Tuesday, December 6, 2011 - link

We are currently being limited by Interface speed, Connection Speed and Controller ability to achieve them.

Giving us ONFI 3.0 NAND doesn't make much difference to normal SSD users. It may be more useful to USB sticks.

We need the above three points to improve. I am hoping to see 1GB/s Consumer SSD soon.

handzilla - Tuesday, December 6, 2011 - link

Hello everyone.

i was just wondering if we are at that point where computing should get cheaper instead of faster.

Is it worth paying hundreds of dollars more for a few seconds more off boot times? i believe most of us are waiting for a few seconds (instead of minutes) for apps to launch, right?

i suppose that a lot of us would rather suspend their computer, instead of shutting it down completely so we don't have to reboot... then re-launch apps all over again?

So, are things fast enough? How much more dollars are we willing to spend for "instant on?" And once "instant on" (or the perception of it) is achieved, what's next?!

Would it be better for OEMs to focus more on making things cheaper?

It seems to me that we have reached the point where RAM and HDD/SSD speeds, capacities & cost, are now more important considerations than the number of cores the CPU has?

When will we see SSDs within the CPU die?! Not likely, right? Then again, was it only AMD who had the vision to have GPUs on the CPU die?

Will our cellphones replace our desktops/laptops? If yes, demand for microSDs (or something else with ultra-faster transfer rates) will eclipse demand for SSDs. Think about it: with multi-core ARM CPU powered cellphone, and virtualization already here, we will soon see Windows 8, 9, etc. within a VM which is running on Android 5, 6, 7...

As for display, perhaps a tablet-cellphone-charger-dock?! Btw, Archos has a tablet with a 250GB drive, so we could see a tablet-cellphone-charger-file server-dock soon?!

SSDs will probably be too late and too expensive?

Just some thoughts.

Paul Tarnowski - Wednesday, December 7, 2011 - link

Yes and no. Instant-on is worth more to the casual user who wants to treat their computing hardware as an appliance than it is to the enthusiast. It's just that only the enthusiasts like us are exposed enough to the market to have SSDs get on their radar.

It could even be argued backwards. The enthusiast prefers sustained speed and size, which can be gotten with a well maintained RAID-0 configuration. The casual user wants bursts of speed (low seek time) and will feel safer keeping the family picture and video collection on an external HDD, which is much more in line with an SSD (and the reason why RAID-0 never took off in the general market but SSDs will). For a personal example, as a casual phone user, I find the time it takes my Android to boot up aggravating, but before I had an SSD I didn't mind the time it would take to reboot.

Suspending the computer is fine and all, but even there, unless the computer was set up for it, the casual user will not know how. And if they do do it, they consider it to be a great undertaking and achievement and spend days if not weeks congratulating themselves (I have first-hand experience).

So over-all, it's not a matter of spending more dollars. Casual users (who only work in Office, browse and check email) don't need more than 60GB on their computers right now, with exceptions being some who may need 90GB. And because they will also happily tote about with an external HDD, particularly since it makes them feel safer about their data, more internal space is generally not needed.

In a lot of ways, the evolution of GPUs resembles FPUs, except that GPUs had longer (more generations, not necessarily longer in the time sense) to mature before being incorporated onto the CPU.

Strictly speaking, in the sense that a component of the CPU is rendering the page into memory, no, it wasn't AMD that first thought to have the GPU* on the CPU, not even in the PC world. In fact, early on, the x86 architecture drove all graphics, and there was no GPU, just a video (CGA, EGA and VGA. I believe SVGA required an accelerator) encoder. In fact, any CPU could drive the graphics today, it's just that the complexity has increased exponentially.

What happened was that the GPU first evolved as a separate video accelerator on the PC (I still remember my ATI card fondly. It could anti-alias TrueType fonts in Windows 3.1) only after seeing the gains of having a chip dedicated to graphics on workstation and consumer machines like the Commodore Amiga**. Even then the first 3D generated graphics were CPU bound. The only philosophical difference now is that the CPU has a dedicated component for video acceleration and 3D rendering, whereas before it was just the (integer bound) CPU muddling through.

SSDs within the die are not going to happen until everything else is on the CPU die, if ever. Even non-cache memory on die is rare (or in the same package); I can't think of any examples off the top of my head, not ones which have the same sizes as is bog-standard even in the low-end of the PC market. It's most likely that future generations of memory are going to be stackable with the CPU, and it's still up in the air whether Universal memory will be practical before SSDs are adapted to the stack.

Cellphones will not replace dedicated computers any time soon. Not when they are at least a decade behind in power and definitely not unless the size limitations of interfacing with them are overcome.

* It wasn't called a GPU. Was no such thing. But then again, early CPUs started out with only integer units, and you had to do your floating point through the integer processors.

** I always get a tear in the eye whenever I read that name or write Amiga. Ah, the nostalgia.