Context-aware Computing, Intel Paints the Future of Devices

by Anand Lal Shimpi on September 15, 2010 12:51 PM EST - Posted in

- Trade Shows

- CPUs

- Intel

- Gadgets

Traditionally, a large focus of IDF has been looking at future computing trends. For the longest time the name of the game was convergence, and the shift to mobility highlighted many IDF keynotes in years past. Today, in Intel's final keynote of the show, Justin Rattner began by talking about context-aware computing.

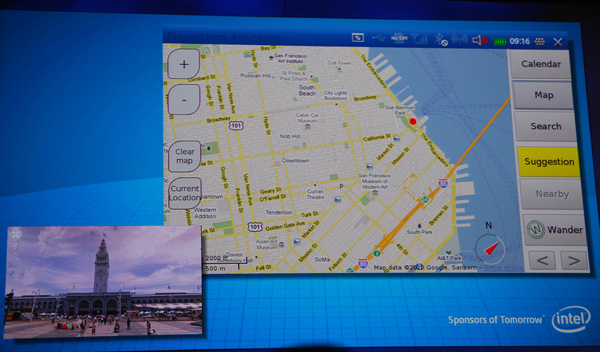

The first demo was the most compelling: an application called the personal vacation assistant.

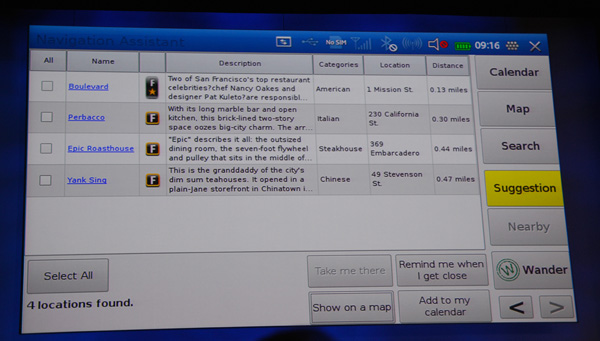

The personal vacation assistant uses information your device already knows about you: your calendar, your current GPS location, and personal preferences for things like food.

The app then uses this data to suggest things to do while you're on vacation. Based on your current GPS coordinates and personal preferences, it can automatically suggest nearby restaurants. It can also recommend touristy things to do in the area, again based on your location.
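To make the restaurant step concrete, here is a minimal Python sketch of the kind of filtering such an app could do: keep only places whose cuisine matches the user's stored preferences, compute the great-circle (haversine) distance from the user's GPS fix, and return the ones within a radius, nearest first. The `Restaurant` record, the `suggest_restaurants` helper and the 2 km default radius are all hypothetical; Intel did not describe how its app actually ranks suggestions.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


@dataclass
class Restaurant:
    # Hypothetical record; a real app would pull this from a places/search service.
    name: str
    cuisine: str
    lat: float
    lon: float


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two (lat, lon) points in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def suggest_restaurants(user_lat, user_lon, liked_cuisines, restaurants, radius_km=2.0):
    """Return (name, distance_km) for preferred cuisines within radius_km, nearest first."""
    matches = []
    for r in restaurants:
        if r.cuisine not in liked_cuisines:
            continue  # skip cuisines the user hasn't said they like
        d = haversine_km(user_lat, user_lon, r.lat, r.lon)
        if d <= radius_km:
            matches.append((r.name, round(d, 2)))
    return sorted(matches, key=lambda m: m[1])
```

Calling `suggest_restaurants(lat, lon, {"thai", "mexican"}, places)` returns the matching places nearest-first. A shipping app would presumably also weigh ratings, opening hours and the calendar data the assistant already has.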

The personal vacation assistant has an autoblog feature. It can automatically blog your location as you move around, post photos as you take them, and even provide links to all of the places you've visited. Obviously there are tons of privacy issues here, but the concept is cool.

The idea is that within the next few years, all devices will support this sort of context-aware computing. While the personal vacation assistant demonstrates location-aware computing, there are other vectors to innovate upon. Your device (laptop, smartphone, tablet) can look at other factors like who you're with or what you're doing. Your smartphone could detect a nearby contact, look at both of your personal preferences, and make dining/activity recommendations based on that information.

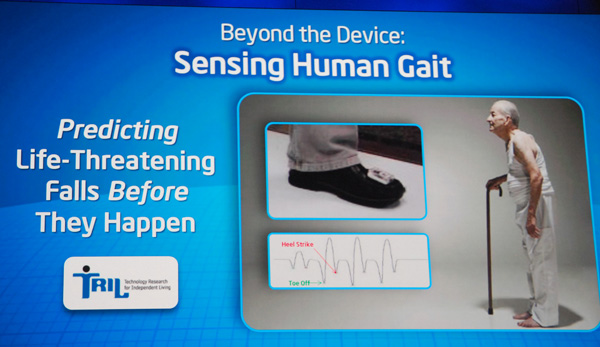

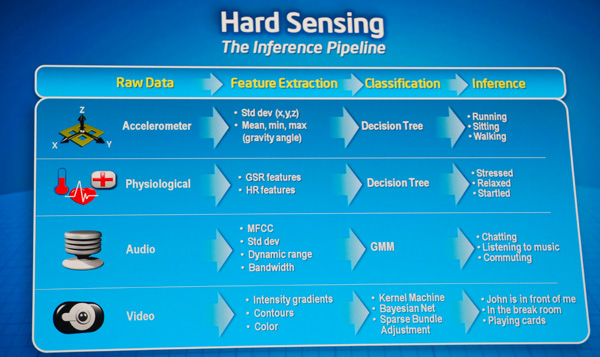

Modern smartphones already have hardware to detect movement (e.g. an accelerometer); the next step is using that hardware to figure out what the user is doing. For example, your phone could detect that you're running, figure out that you may be hungry afterwards, and then supply you with food recommendations.

Motion sensors in a smartphone could also detect whether the user has fallen and automatically contact people in the address book (or emergency services).
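Phone-based fall detection is often approached with a simple threshold heuristic: a brief near-free-fall (acceleration magnitude well below 1 g) followed shortly by a large impact spike. The Python sketch below illustrates that idea under assumed values: 0.4 g for free fall, 2.5 g for impact, a 0.5 s window between them, and a 50 Hz sample rate. The `detect_fall` helper and its thresholds are hypothetical, not how Intel's demo worked, and a real detector would need tuning plus extra checks (for example, the user lying still afterwards) before it calls anyone.

```python
import math

# Assumed tuning values for illustration only.
FREE_FALL_G = 0.4  # magnitude below this suggests the phone is falling
IMPACT_G = 2.5     # magnitude above this suggests a hard landing
WINDOW_S = 0.5     # impact must follow free fall within this many seconds


def magnitude_g(sample):
    """Length of an (x, y, z) accelerometer reading expressed in g."""
    x, y, z = sample
    return math.sqrt(x * x + y * y + z * z)


def detect_fall(samples, rate_hz=50):
    """Return the index of the impact sample if a fall pattern is found, else None.

    samples: sequence of (x, y, z) readings in g, taken at rate_hz.
    """
    window = int(WINDOW_S * rate_hz)  # window length in samples
    last_free_fall = None
    for i, s in enumerate(samples):
        m = magnitude_g(s)
        if m < FREE_FALL_G:
            last_free_fall = i  # remember the most recent free-fall sample
        elif m > IMPACT_G and last_free_fall is not None and i - last_free_fall <= window:
            return i  # impact shortly after free fall: likely a fall
    return None
```

A phone at rest reads about 1 g, so a spike alone (say, the phone being tapped on a table) does not trigger the detector; only the free-fall-then-impact sequence does.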

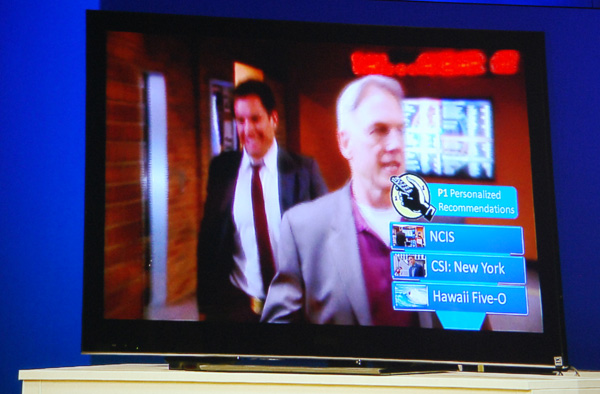

Context-aware computing can also apply to dumb devices like remote controls. In his next demo, Justin Rattner showed a TV remote control that made show/channel recommendations based on who was holding it.

The remote control determined who the user was by the way the person held it.

This all depends on having a ton of sensors available and combining their data with compute power. Intel was also careful to point out that context-aware computing must be married to security policies to prevent this very personal information from automatically being shared with just anyone.

Personally, I want my smartphone, notebook and desktop to work for me a little more autonomously. Intel talked about the idea that your phone could recommend what you should eat at a restaurant based on your level of activity for the day and the restaurant's online menu (found using your GPS location and an automatic web search). Obviously this depends on restaurants sharing nutritional information in a uniform format, but the upside is pretty neat.

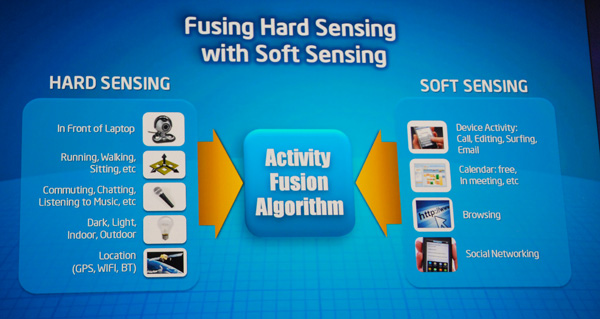

Hard sensing (location, physical attributes) is important, but combining it with soft sensing (currently running applications, calendar entries, etc.) is key to making context-aware computing work as well as possible.

Context-aware computing feels a lot like the promises of CE/PC convergence from several years ago. I do believe it will happen, and our devices will become much more aware of what we're doing, but I suspect it'll be several years before context-aware computing is as commonplace as convergence is today.

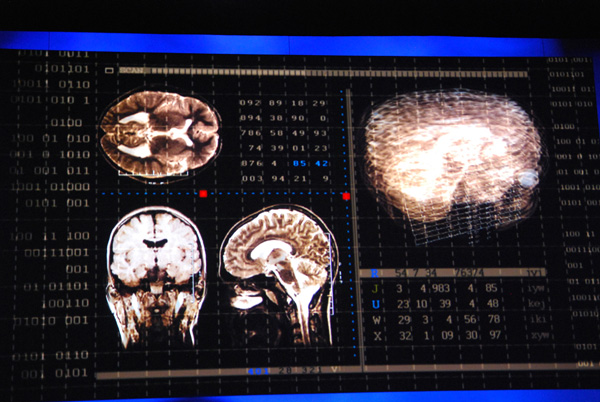

Justin Rattner ended the keynote with a look into the next 5 to 10 years of computing. Intel is working with CMU researchers on sensing brain waves. Feeding the output of those sensors into computing devices could enable a completely new level of context-aware computing. That's the holy grail after all: a smartphone, PC or other computing device that is aware not only of your external context but also of what you're thinking.
