Apple's M1 Pro, M1 Max SoCs Investigated: New Performance and Efficiency Heights

by Andrei Frumusanu on October 25, 2021 9:00 AM EST

Posted in: Laptops, Apple, MacBook, Apple M1 Pro, Apple M1 Max

CPU MT Performance: A Real Monster

More interesting than ST performance is MT performance. With 8 performance cores and 2 efficiency cores, this is now the largest iteration of Apple Silicon we’ve seen.

As a prelude to the scores, I wanted to remark on a few things about the previous, smaller M1 chip. The 4+4 setup on the M1 meant that a significant chunk of the MT performance was enabled by the E-cores, with the SPECint score in particular seeing a +33% performance boost versus just the 4 P-cores of the system. Because the new M1 Pro and Max have 2 fewer E-cores, and assuming linear scaling, the theoretical peak of the M1 Pro/Max should be +62% over the M1. Of course, the new chips should behave better than linear, due to the better memory subsystem.
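The linear-scaling estimate above can be reproduced with a few lines of arithmetic. This is only a sketch under the stated assumption of perfectly linear scaling; the function name and the "P-core equivalent" unit are illustrative and not part of the original measurements. The one input taken from the text is the +33% SPECint uplift the M1's four E-cores add over its four P-cores.

```python
def linear_mt_estimate(p_cores, e_cores, e_core_weight):
    """Throughput in P-core equivalents, assuming perfectly linear scaling."""
    return p_cores + e_cores * e_core_weight

# From the text: on the M1, 4 E-cores add +33% on top of 4 P-cores (SPECint).
M1_E_UPLIFT = 0.33

# 4 E-cores are worth 0.33 * 4 P-cores, so one E-core is ~0.33 of a P-core.
e_core_weight = M1_E_UPLIFT * 4 / 4

m1 = linear_mt_estimate(4, 4, e_core_weight)          # 4P + 4E
m1_pro_max = linear_mt_estimate(8, 2, e_core_weight)  # 8P + 2E

gain = m1_pro_max / m1 - 1
print(f"Theoretical M1 Pro/Max gain over M1: {gain:+.1%}")
```

Under these assumptions the ratio works out to 8.66 / 5.32, or roughly +62.8%, in line with the +62% figure quoted above.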

In the detailed scores I’m showcasing the full 8+2 scores of the new chips, and later we’ll talk about the 8 P scores in context. I hadn’t run the MT scores of the new Fortran compiler set on the M1, so some numbers will be missing from the charts.

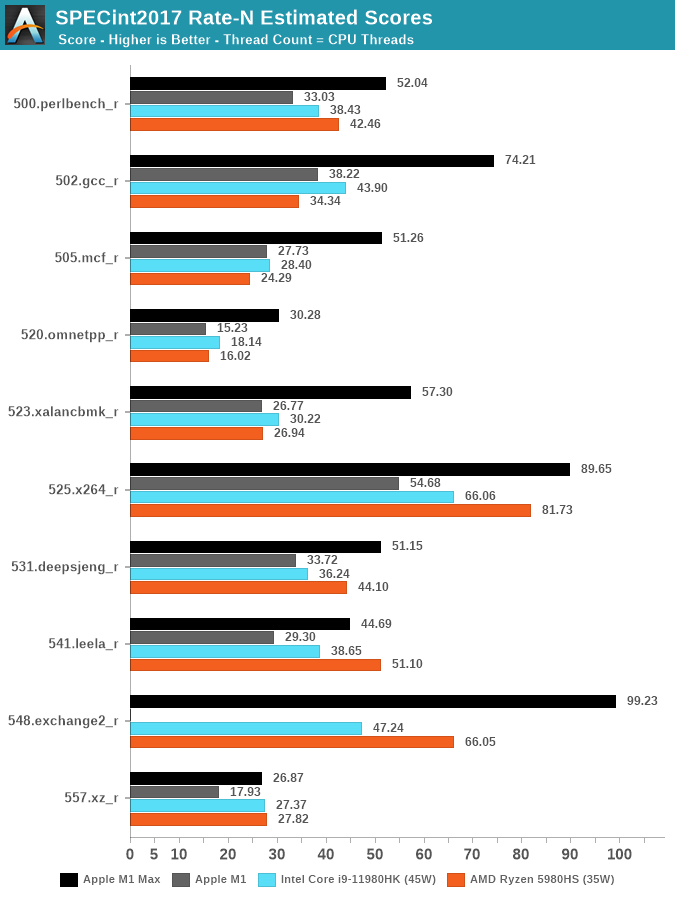

Looking at the data, there are very evident changes to Apple’s performance positioning with the new 10-core CPU. Although, yes, Apple does have 2 additional cores versus the 8-core 11980HK or the 5980HS, Apple’s silicon is far ahead of either competitor in most workloads. Again, to reiterate, we’re comparing the M1 Max against Intel’s best of the best, and also nearly AMD’s best (the 5980HX has a 45W TDP).

The workload that stood out to me the most was 502.gcc_r, where the M1 Max nearly doubles the M1 score and lands +69% ahead of the 11980HK. We’re seeing similarly mind-boggling performance deltas in other workloads; memory-bound tests such as mcf and omnetpp are evidently Apple’s forte. A few of the workloads, mostly the more core-bound or L2-resident ones, show smaller advantages, or sometimes even fall behind AMD’s CPUs.

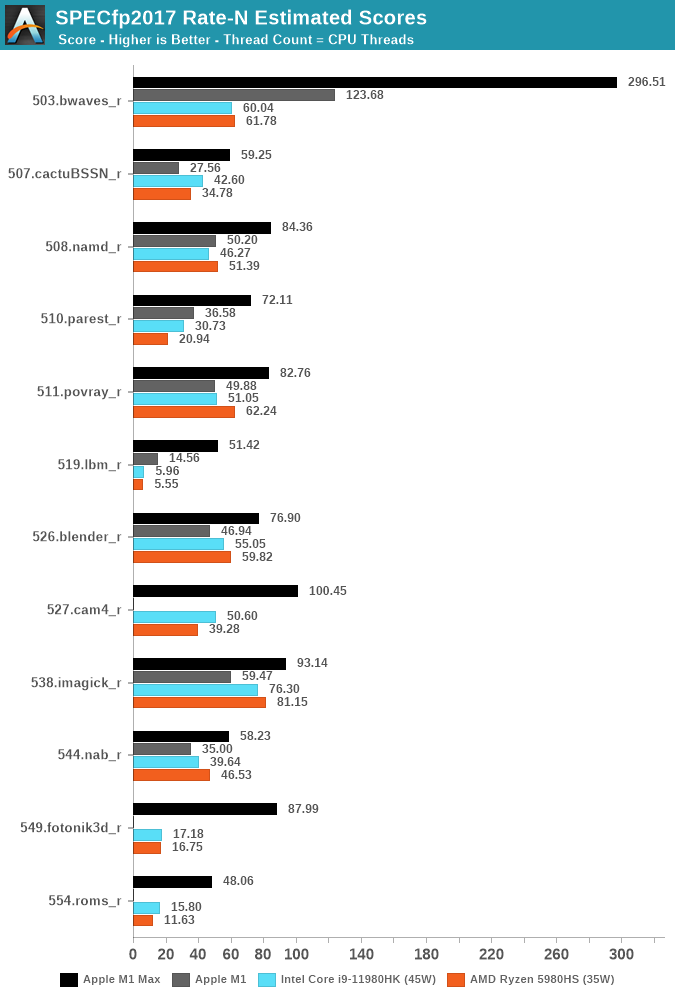

The fp2017 suite has more workloads that are memory-bound, and it’s here where the M1 Max is absolutely absurd. The workloads that put the most pressure on memory and stress the DRAM the most, such as 503.bwaves, 519.lbm, 549.fotonik3d and 554.roms, all show performance advantages of multiple factors over the best Intel and AMD have to offer.

The performance differences here are just insane, and really showcase just how far ahead Apple’s memory subsystem is in its ability to allow the CPUs to scale to such a degree in memory-bound workloads.

Even workloads which are more execution-bound, such as 511.povray or 538.imagick, are still very clearly in favour of the M1 Max, albeit not as dramatically, achieving significantly better performance at drastically lower power.

We noted how the M1 Max CPUs are not able to fully take advantage of the DRAM bandwidth of the chip. As of writing we haven’t measured the M1 Pro, but we imagine that design would not score much lower than the M1 Max here. We can’t help but ask ourselves how much better the CPUs would score if the cluster and fabric allowed them to fully utilise the memory.

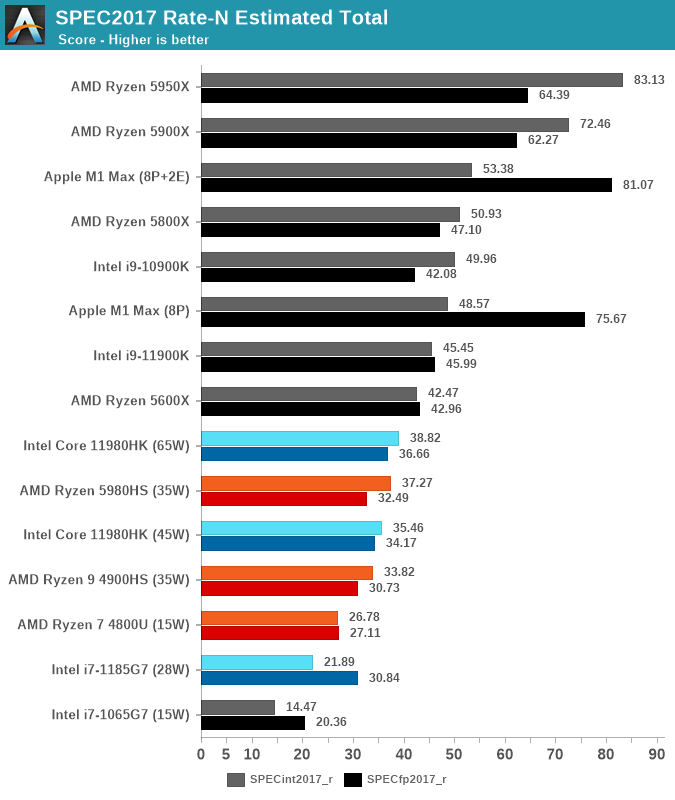

In the aggregate scores, there are two sides. On the SPECint suite, the M1 Max lies +37% ahead of the best competition; it’s a very clear win here, and given the power levels and TDPs, the performance-per-watt advantage is clear. The M1 Max is also able to outperform desktop chips such as the 11900K, or AMD’s 5800X.

In the SPECfp suite, the M1 Max is in its own category of silicon with no comparison in the market. It completely demolishes any laptop contender, showcasing 2.2x the performance of the second-best laptop chip. The M1 Max even manages to outperform the 16-core 5950X, a chip whose package power is at 142W, with total system power quite a bit higher still. It’s an absolutely absurd comparison and a situation we haven’t seen the likes of.

We also ran the chip with just the 8 performance cores active. As expected, the scores are a little lower, by 7-9%; the 2 E-cores here represent a much smaller percentage of the total MT performance than on the M1.
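To put the 8P-only result in the same terms as the M1's +33% E-core boost, the 7-9% deficit can be converted into an uplift of the full 8+2 configuration over the P-cores alone. The sketch below is purely illustrative arithmetic: the helper name is hypothetical, and the "linear expectation" assumes each E-core is worth about 0.33 of a P-core, a figure derived from the M1's +33% SPECint uplift rather than measured on the new chips.

```python
def e_core_uplift(p_only_deficit):
    """Uplift of the full 8+2 score over the 8P-only score.

    p_only_deficit is how far the 8P-only score sits below the 8+2 score,
    e.g. 0.07 for "7% lower".
    """
    return 1 / (1 - p_only_deficit) - 1

# Range quoted in the text: 8P-only scores are 7-9% lower than 8+2.
for deficit in (0.07, 0.09):
    print(f"8P is {deficit:.0%} lower -> E-cores add {e_core_uplift(deficit):+.1%}")

# Assumed linear expectation: 2 E-cores at ~0.33 P-core each, on top of 8 P-cores.
linear_expectation = 2 * 0.33 / 8
print(f"Linear expectation: {linear_expectation:+.1%}")

# For comparison, the M1's 4 E-cores added +33% over its 4 P-cores.
```

The two measured bounds translate to roughly +7.5% and +9.9%, which brackets the assumed linear expectation of about +8.3% and sits far below the M1's +33%.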

Apple’s stark advantage in specific workloads here does make us ask how this translates into applications and use-cases. We’ve never seen such a design before, so it’s not exactly clear where things would land, but I think Apple has been rather clear that their focus with these designs is catering to the content creation crowd, the power users who use the large productivity applications, be it in video editing, audio mastering, or code compiling. These are all areas where the microarchitectural characteristics of the M1 Pro/Max would shine and are likely to vastly outperform any other system out there.

493 Comments


arglborps - Friday, March 25, 2022

Exactly. In the world of video editing suites Premiere is the slowest, buggiest piece of crap you can think of, not really a great benchmark except for how fast to crash an app. DaVinci and Final Cut run circles around it.

ikjadoon - Monday, October 25, 2021

AnandTech literally tested the M1 Max on PugetBench Premiere Pro *in this article*. Surprise, surprise 955 points on standard, 868 on extended, thus just 4% slower than a desktop 5950X + desktop RTX 3080.

"biggest problem with the Apple eco system" Huh? Premiere Pro has already been written in Apple Silicon's arm64 for macOS. It's been months now.

>We’ll start with Puget System’s PugetBench for Premiere Pro, which is these days the de facto Premiere Pro benchmark. This test involves multiple playback and video export tests, as well as tests that apply heavily GPU-accelerated and heavily CPU-accelerated effects. So it’s more of an all-around system test than a pure GPU test, though that’s fitting for Premiere Pro giving its enormous system requirements.

You clearly did not read the article, and this is a misinformed "slight" against Apple's SoC performance: "These benchmarks disagree with my narrative, so I need to change the benchmarks quickly now."

I don't get why so many people are so addicted to their "Apple SoCs can't be good" narrative that they'll literally ignore:

1) the AnandTech article that benchmarked what they claimed never got benchmarked

2) the flurry of press when Adobe finally ported Premiere Pro to arm64

easp - Monday, October 25, 2021

So if one can't really compare "real-world" benchmarks between platforms how are you so sure that Macs fall short?

sirmo - Monday, October 25, 2021

We aren't sure of anything. Why are we even here?

SarahKerrigan - Monday, October 25, 2021

Sure, OEM submissions are mostly nonsense. SPEC is a useful collection of real-world code streams, though. We use it for performance characterization of our new cores, and we have an internal database of results we've run in-house for other CPUs too (currently including SPARC, Power, ARM, IPF, and x86 types.) Run with reasonable and comparable compiler settings, which Anandtech does, it's absolutely a useful indicator of real world performance, one of the best available.

schujj07 - Monday, October 25, 2021

You are the first person I have talked to in industry that actually uses SPEC. All the other people I know have their own things they run to benchmark.

phr3dly - Monday, October 25, 2021

I'm in the industry. As a mid-sized company we can't afford to buy every platform and test it with our workflow. So I identify the spec scores which tend to correlate to our own flows, and use those to guide our platform evaluation decisions. Looking at specific spec scores is a reasonable proxy for our own workloads.

0x16a1 - Monday, October 25, 2021

uhhhh.... SPEC in the industry is still used. SPEC2000? Not anymore, and people have mostly moved off of 2006 too onto 2017. But SPEC as a whole is still a useful aggregate benchmark. What others would you suggest?

sirmo - Monday, October 25, 2021

It's a synthetic benchmark which claims that it isn't. But it very much is. Anything that's closed source and compiled by some 3rd party that can't be verified can be easily gamed.

Tamz_msc - Tuesday, October 26, 2021

LOL more dumb takes. Majority of the benchmarks are licensed to SPEC under open-source licenses.

https://www.spec.org/cpu2017/Docs/licenses.html