The OpenPOWER Saga Continues: Can You Get POWER Inside 1U?

by Johan De Gelas on February 24, 2017 8:00 AM EST

Tyan's GT75-BP012

Getting to the meat of today's article, we have the Tyan GT75-BP012. Anton has already described the Tyan GT75 servers in great detail here, so we will recap and add a few details.

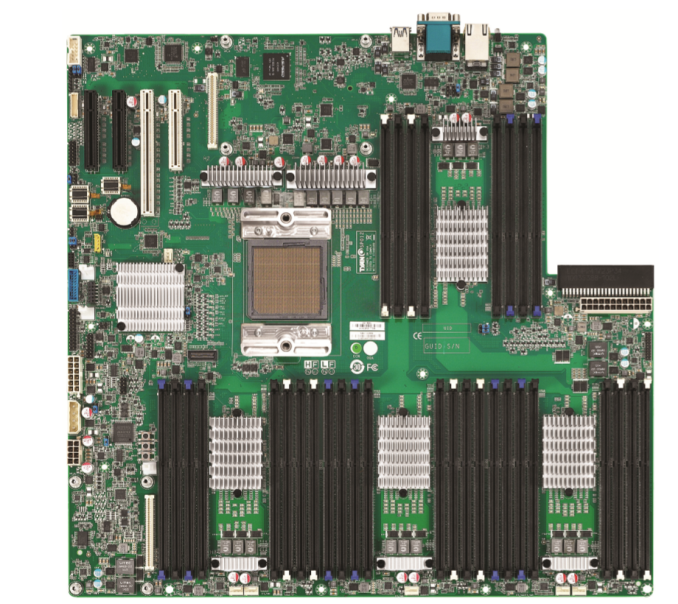

The Tyan GT75 machines (just like the Tyan TN71-BP012 servers launched a year ago) are based on one IBM POWER8 Turismo Single Chip Module (SCM) processor, offering either eight or ten cores. This CPU finds itself paired with Tyan's Habanero motherboard, the same as in IBM's most affordable OpenPOWER server, the S812LC.

The board has 32 DIMM slots using four IBM Centaur memory buffer chips (MBCs). Since the operational voltage of the Centaur chip PHY maxes out at 1.43V, only low power DDR3 DIMMs are supported. The largest supported DIMMs are the quad ranked 32 GB DIMMs with 4 Gbit chips, allowing the server to have up to 1 TB of RAM. Unfortunately, the latest 8 Gbit based DIMMs are not supported. In the standard configuration, Tyan ships the server with eight 16 GB DIMMs, for a total of 128 GB.
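The capacity figures above are simple multiplication, and a minimal sketch makes the arithmetic explicit. The slot count, buffer count, and DIMM sizes come from the text; the function and variable names are only illustrative.

```python
# Memory capacity arithmetic for the Habanero board, using figures from the text.
# Function and variable names are illustrative, not part of any vendor tool.

def total_memory_gb(dimm_count: int, dimm_size_gb: int) -> int:
    """Total memory in GB for a set of identical DIMMs."""
    return dimm_count * dimm_size_gb

DIMM_SLOTS = 32        # DIMM slots on the board
CENTAUR_BUFFERS = 4    # IBM Centaur memory buffer chips

slots_per_buffer = DIMM_SLOTS // CENTAUR_BUFFERS
max_capacity_gb = total_memory_gb(DIMM_SLOTS, 32)   # all slots filled with 32 GB DIMMs
standard_config_gb = total_memory_gb(8, 16)         # eight 16 GB DIMMs as shipped

print(f"DIMM slots per Centaur buffer: {slots_per_buffer}")
print(f"Maximum capacity: {max_capacity_gb} GB ({max_capacity_gb // 1024} TB)")
print(f"Standard configuration: {standard_config_gb} GB")
```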

Tyan GT75: IBM POWER8 Turismo CPU Options

| | POWER8 8-Core | POWER8 10-Core |
| --- | --- | --- |
| Core Count | 8 | 10 |
| Threads | 64 | 80 |
| Nominal Freq. / Turbo | 2.33 GHz / 3.025 GHz | 2.095 GHz / 2.926 GHz |
| L2 Cache | 512 KB per core | 512 KB per core |
| L3 Cache | 8 MB eDRAM per core, 64 MB per CPU | 8 MB eDRAM per core, 80 MB per CPU |
| DRAM Interface | DDR3L-1600 (Low Power Only) | DDR3L-1600 (Low Power Only) |
| PCI Express | 3 × PCIe controllers, 32 lanes | 3 × PCIe controllers, 32 lanes |
| TDP | 130 W / 169 W | 130 W / 169 W |

As the OpenPOWER POWER8 has to fit and operate within a 1U home, the clockspeed is limited to 2.328 GHz nominal. However, that is only a paper spec, just like the nominal clockspeed of the Xeon E5. In reality, the power governor defaults to "on demand". In that case, the CPU runs at 2.06 GHz at low load and boosts up to 3.025 GHz when it is fully loaded. The speedsteps are very small, only about 30 MHz each, so the second highest speedstep is 2.99 GHz. Below you find the configuration table of all Tyan GT75 servers.

Comparison of Tyan GT75 Servers

| | BSP012G75V4H-B4C | BSP012G75V4H-Q4T | BSP012G75V4H-Q4F |
| --- | --- | --- | --- |
| CPU | IBM POWER8 8-Core, 2.328 GHz, 130 W/169 W TDP | IBM POWER8 10-Core, 2.095 GHz, 130 W/169 W TDP | IBM POWER8 10-Core, 2.095 GHz, 130 W/169 W TDP |
| Installed RAM | 8 × 16 GB R-DDR3L | 16 × 16 GB R-DDR3L | 32 × 16 GB R-DDR3L |
| RAM (subsystem) | Up to 1 TB of DDR3L-1333 DRAM, 32 RDIMM modules, four IBM Centaur MBCs | Up to 1 TB of DDR3L-1333 DRAM, 32 RDIMM modules, four IBM Centaur MBCs | Up to 1 TB of DDR3L-1333 DRAM, 32 RDIMM modules, four IBM Centaur MBCs |
| Storage | 2 × 512 GB SSDs | 2 × 1 TB SSDs | 4 × 1 TB SSDs |
| Tyan Storage Mezzanine | MP012-9235-4I (4-port SATA 6Gb/s IOC w/o RAID stack) | MP012-9235-4I (4-port SATA 6Gb/s IOC w/o RAID stack) | MP012-9235-4I (4-port SATA 6Gb/s IOC w/o RAID stack) |
| LAN | 4 × GbE ports | 4 × 10 GbE ports | 4 × 10 GbE ports |
| Tyan LAN Mezzanine | MP012-5719-4C Broadcom 1GbE LAN Mezz Card | MP012-B840-4T Qlogic+Broadcom 10GbE LAN Mezz Card | MP012-Q840-4F Qlogic 10GbE LAN Mezz Card |
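The governor behaviour described above the table (low clocks at idle, boost under load) can be observed on a Linux system through the standard cpufreq sysfs files. The sketch below assumes the server runs Linux with a cpufreq driver loaded; the helper name `read_cpufreq` and its `sysfs_root` parameter are our own, and on the kernel side the governor is spelled `ondemand`.

```python
from pathlib import Path

def read_cpufreq(cpu: int = 0, sysfs_root: str = "/sys/devices/system/cpu") -> dict:
    """Return the governor and the current/min/max frequency (in GHz) of one CPU.

    Reads the standard Linux cpufreq sysfs files, which report kHz.
    Any file that is missing or unreadable yields None.
    """
    base = Path(sysfs_root) / f"cpu{cpu}" / "cpufreq"

    def read(name: str):
        try:
            return (base / name).read_text().strip()
        except OSError:
            return None

    def khz_to_ghz(value):
        try:
            return int(value) / 1_000_000
        except (TypeError, ValueError):
            return None

    return {
        "governor": read("scaling_governor"),
        "current_ghz": khz_to_ghz(read("scaling_cur_freq")),
        "min_ghz": khz_to_ghz(read("scaling_min_freq")),
        "max_ghz": khz_to_ghz(read("scaling_max_freq")),
    }

if __name__ == "__main__":
    # On a machine without cpufreq support every value is simply None.
    print(read_cpufreq(0))
```

Polling `current_ghz` while a benchmark ramps up is a simple way to watch the small speedsteps in action.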

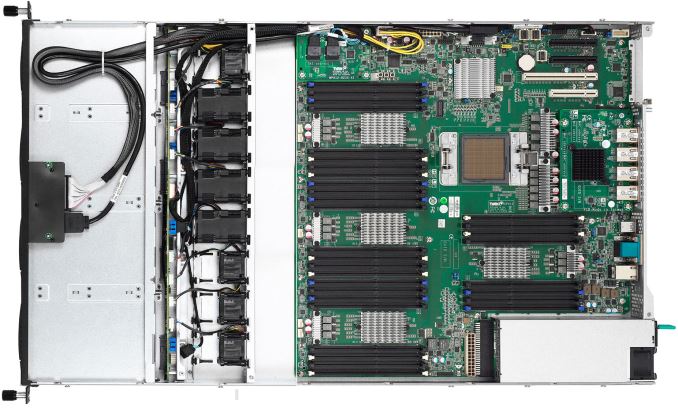

In today's article we review the basic model, the BSP012G75V4H-B4C. Notice the twelve (!) fans.

The Tyan GT75-BP012 makes use of Tyan's mezzanine cards for networking and for the storage controller. As a result, it can be equipped with up to four 3.5” hot-swappable SATA 6G HDD/SSDs and four network controllers (1 GbE or 10 GbE) without using the 8-lane PCIe riser.

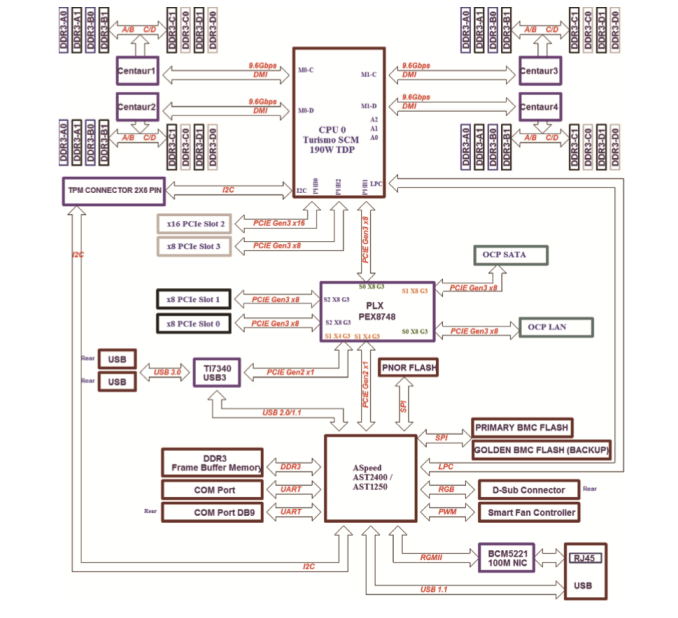

Now if you've been counting the PCIe lanes required for all of this, it seems like we should be a bit short, and indeed that's the case. Digging a bit deeper, we'll find that the server is using a PLX PEX8748 PCIe switch to take a PCIe 3.0 x8 root port from the CPU and switch it among the LAN riser, SATA riser, and the two black PCIe x8 slots.
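To get a feel for how much the shared x8 uplink matters, here is a rough back-of-the-envelope sketch. It compares peak line rates only, ignores protocol overhead, and assumes the fully populated 10 GbE configuration; the function names are illustrative. The lane widths of the mezzanine connectors are not stated in the article, so the comparison is based on device bandwidth rather than lane counts.

```python
# Peak-bandwidth comparison for devices behind the PCIe switch.
# Assumptions: PCIe 3.0 at 8 GT/s per lane with 128b/130b encoding,
# SATA 6 Gb/s with 8b/10b encoding, Ethernet at raw line rate,
# and no protocol overhead. Names are illustrative.

def pcie3_gbytes_per_s(lanes: int) -> float:
    """PCIe 3.0 bandwidth per direction in GB/s."""
    return lanes * 8.0 * (128 / 130) / 8

def sata6g_gbytes_per_s(ports: int) -> float:
    """Aggregate SATA 6 Gb/s payload bandwidth in GB/s."""
    return ports * 6.0 * (8 / 10) / 8

def ethernet_gbytes_per_s(ports: int, gbit_per_port: float) -> float:
    """Aggregate Ethernet line rate in GB/s."""
    return ports * gbit_per_port / 8

uplink = pcie3_gbytes_per_s(8)                       # x8 root port from the CPU
onboard_demand = sata6g_gbytes_per_s(4) + ethernet_gbytes_per_s(4, 10)
demand_with_slots = onboard_demand + 2 * pcie3_gbytes_per_s(8)  # both x8 slots busy

print(f"Uplink:                     {uplink:.2f} GB/s")
print(f"SATA + 10 GbE demand:       {onboard_demand:.2f} GB/s")
print(f"With both x8 slots in use:  {demand_with_slots:.2f} GB/s")
print(f"Worst-case oversubscription: {demand_with_slots / uplink:.2f}x")
```

Under these assumptions the storage and network mezzanines alone stay just below the uplink's peak rate, and the link only becomes heavily oversubscribed once the two x8 slots are also driven at full speed.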

28 Comments

Zzzoom - Friday, February 24, 2017 - link

"As important as performance per watt is, several markets – HPC, Analytics, and AI chief among them – consider performance the most important metric. Wattage has to be kept under control, but that is it."

What a load of garbage.

JohanAnandtech - Saturday, February 25, 2017 - link

And now maybe some arguments that substantiate your opinion?

SarahKerrigan - Sunday, February 26, 2017 - link

In HPC specifically, power consumption is a major issue. This was the entire root of the success of the Blue Gene line back in the day, and why NEC is shifting its supercomputing CPUs to progressively more efficient cores instead of higher-performance cores now (SX-9: 102.4GF/core; SX-ACE: 64GF/core). HPC is sensitive to running cost, and power dissipation is a critical factor in that.

Zzzoom - Monday, February 27, 2017 - link

Go read the 7+ years worth of materials from the EE HPC Working Group.

JohanAnandtech - Wednesday, March 1, 2017 - link

In a system with 2-4 GPUs, 512 GB of RAM, the TDP of the CPU is not a dealbreaker. I can agree that some HPC markets are more sensitive to perf/watt; but I have seen a lot of examples where raw performance per dollar was just as important.

Zzzoom - Wednesday, March 1, 2017 - link

POWER8 TDP is 45W-102W higher per socket than the highest spec Xeon E5. That's 90W-204W higher per node where each node consumes 1500W-2000W, or 6-10% total on a site with a multi-million dollar power bill that went to great lengths to bring down the PUE by a similar amount. So for anyone to pick POWER8 it has to do better on energy to solution through its unique features, or be considerably cheaper (ha!). POWER8's advantage is NVLink, but TSUBAME3 going with Intel+PLX switches on top of NVLink shows that it's not that big of a deal.

Anyway, the efficiency requirements on the CORAL procurements are pretty strict so scale-out POWER9+Volta will have to shed a lot of weight.

Zzzoom - Wednesday, March 1, 2017 - link

I forgot about the memory buffers. It's even worse.

mystic-pokemon - Sunday, March 5, 2017 - link

Guys, I know shit ton of stuff about a server Johan listed above. He has a point when he says Power consumption is only so much important.

In short, when you combine all aspects to TCO model: POWER8 server delivers most optimal TCO value

We consider all the following into our TCO model

a) Cost of ownership of the server

b) Warranty (Lesser than conventional server, different model of operations)

c) What it delivers (How many independent threads (SMT8 on POWER8 remember ? 192 hardware threads), how much Memory Bandwidth (230 GBPs), how much total memory capacity in 1 server ( 1 TB with 32 GB)

d) For a public cloud use-case, how many VMs (with x HW threads and x memory cap / bw ) can you deliver on 1 POWER8 server compared to other servers in fleet today ? Based on above stats, a lot .

e) Data center floor lease cost in DC ( 24 of these servers in 1 Rack, much denser. Average the lease over age of server: 3 years ). This includes all DC services like aggers, connectivity and such.

f) Cost per KWH in the specific DC ( 1 Rack has nominal power 750W)

All this combined, POWER has good TCO. It's a massively parallel server; that's where the major advantage comes from. Choose your workload wisely. That's why companies continue to work on it.

I am talking about all this without actually combining with CAPI over PCIe and openCAPI. Get it ? POWER is going no where.

Michael Bay - Friday, February 24, 2017 - link

I think at this point in time intel has more to fear from goddamn ARM than IBM in server space.

Okay, maybe AMD as well.

JohanAnandtech - Friday, February 24, 2017 - link

Personally I think OpenPOWER is a viable competitor, but in the right niches (In memory databases, GPU accelerated + NVlink HPC). Just don't put that MHz beast in a far too small 1U cage. :-)