Microsoft Lifts the Lid on Some Intel Skylake-EP Details via the Open Compute Project

by Ian Cutress on November 1, 2016 3:00 PM EST

Posted in: CPUs, Intel, Open Compute, Purley, Azure, LGA3647, Project Olympus, Skylake-SP

As part of the European Digital Infrastructure Summit in London this week, Microsoft's Azure team will be lifting the lid on Project Olympus, the next generation hyperscale cloud hardware design and model for open source hardware development, in collaboration with the Open Compute Project (OCP). Project Olympus is being described as a way to embrace future platforms in a standardized design, much like other OCP projects, and the open source standards behind the platform are offering insights into Intel’s Skylake-EP Platform, known as Purley.

It seems odd for information about Skylake-EP to be released at this time (technically, none of the documents mention Intel, Skylake or Purley, but it can be deciphered as below), especially given the recent release of Broadwell-E/EP and Intel’s previous stance of limited data release prior to launch. It would appear that the information Microsoft are providing at the summit has been sanctioned; however, putting two and two together sometimes requires a little digging.

All this information is posted on the Microsoft Azure blog, which links directly to the OCP pages where motherboard specifications and server mechanical specifications are provided in PDF format.

It’s a Socket We’ve Seen Before, Which Can Make Waves

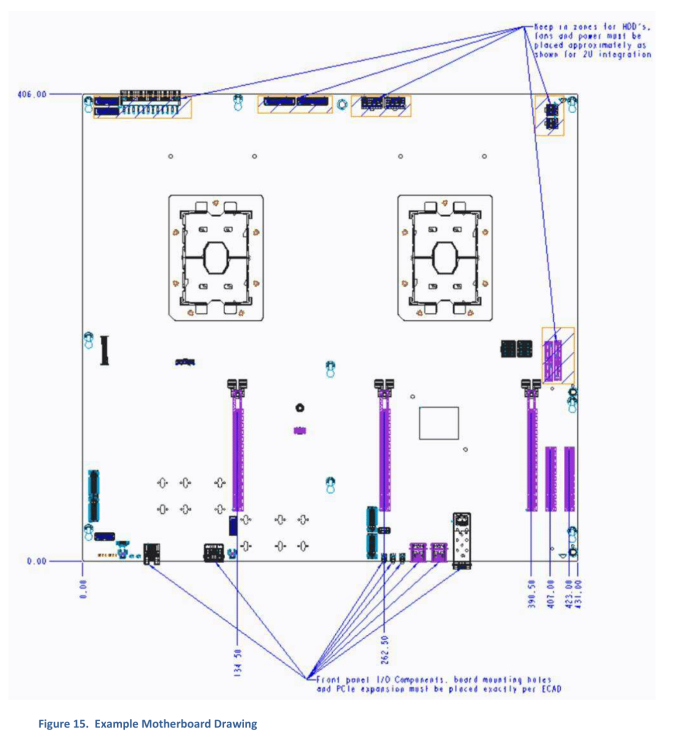

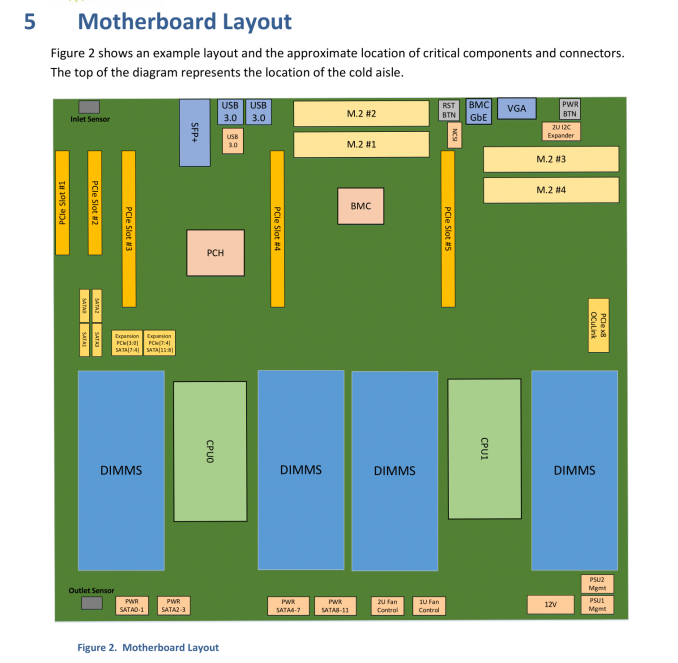

Being the motherboard guy at AnandTech, I skipped straight to the motherboard information. Page 31 of the motherboard document gives the following example motherboard drawing:

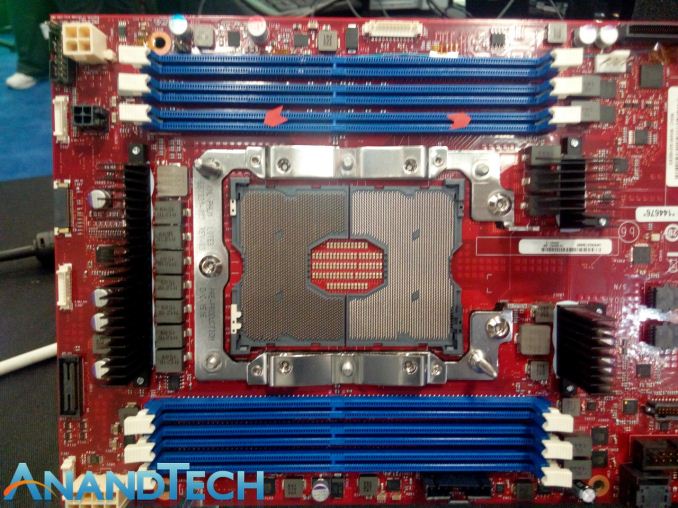

Here is a dual-socket design, with a set of PCIe slots, some power connectors and other I/O components (some fiber Ethernet, for example). But jam packed in the middle are two very large sockets. We’ve seen these before, back at Supercomputing 2015 when Intel announced Knights Landing, which is a Xeon Phi product:

Xeon Phi's Knights Landing design uses an LGA3647 implementation based on its 72 cores, 16GB of MCDRAM, and six memory channels. There are a lot of pins because there’s a lot to power up.

In previous generations, the EP and E processors have shared the same socket. If I wanted to use my E5-2699 v4 Broadwell-EP LGA2011-3 processor in an X99 consumer motherboard instead of an i7-6950X, I could. Essentially all the high-end desktop Core CPUs and all the Xeon E5 CPUs have shared a common socket, making it easy for one socket design to span 1P/2P/4P processors. If Purley / Skylake-EP uses the LGA3647 socket, this means one of four things.

The first is that this might not be Skylake-EP, but something odd like Skylake-EN.

The second is that Skylake-E will also share the same socket, and be LGA3647. This sounds somewhat implausible, given that Skylake-EP will have to handle at least the same number of cores as Broadwell-EP, so there would be a lot of pins on a consumer platform for no reason.

The third possibility is that Skylake-E and Skylake-EP are going to use different sockets. This would indicate a split between consumer HEDT and dual-socket workstations and servers. Given previous generational changes, Skylake-E for consumers is expected to follow a similar pattern – around 2000 pins in a single socket design. However, if Skylake-EP is making the jump to a significantly larger socket, especially for 2P workstation and server designs, it opens up a wider spread in pricing structures and implementations between the two platforms.

The fourth possibility extends from the third: Skylake-EP may have two socket designs depending on the core count of the die. For Broadwell-EP, there were three designs for the silicon underneath – a low core count (LCC), a medium core count (MCC) and a high core count (HCC). If Intel are splitting up the sockets, it may be the case that only the HCC or MCC+HCC sides of the equation are using LGA3647. LCC designs are typically used for the consumer E series parts anyway, so Intel may decide to make the low core designs of EP on the smaller socket. There’s a wealth of possibility here.

I Heard You Like RAM. I Heard You Like Storage.

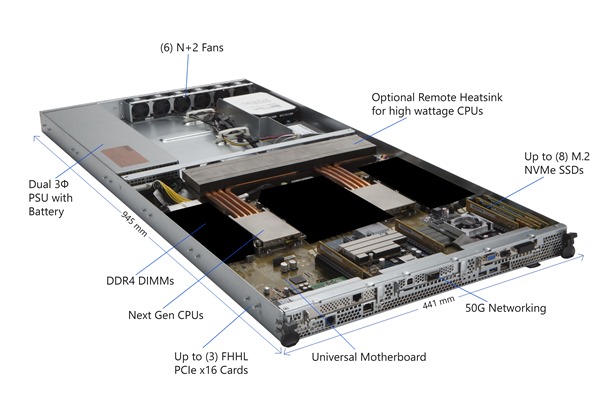

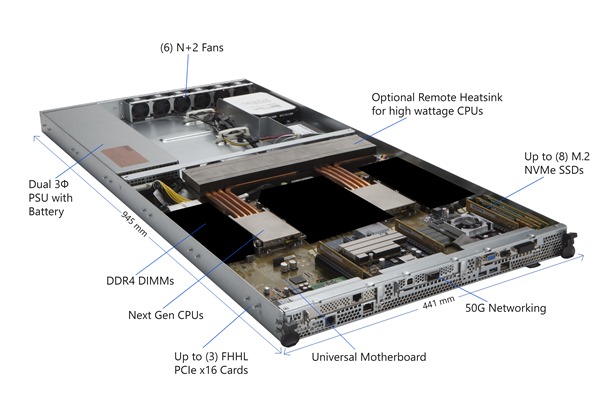

On the main Microsoft Azure page, a handy diagram of an example machine was provided (with some areas blacked out):

Here we see that motherboard from the image above, using two low profile heatsinks with copper piping feeding an optional heatsink inside the chassis. To the sides of each of the sockets are big black squares, indicating where the DDR4 memory should go. Nearer the bottom of the board are networking implementations (50G is labeled), and PCIe slots suitable for three full-height, half-length (FHHL) PCIe cards. Interestingly, the right-hand side is labeled ‘up to 8 M.2 NVMe SSDs’.

Back to the motherboard specification, we see the list of blacked out areas along with a more comprehensive sequence of potential configurations:

There are spots for up to 32 DIMMs, which makes 16 per socket. Depending on how many memory controllers the CPU has, this could mean 8-channel and 2 DIMMs per channel (DPC), or 4-channel and 4 DPC. Nowhere does it state the maximum DRAM support per CPU, but DDR4 LRDIMMs are currently at 256GB/module, meaning a potential maximum of 4TB per CPU or 8TB per system. We expect Skylake-EP to support 3D XPoint at some stage as well.
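The slot arithmetic above can be checked with a few lines of Python. This is only a sketch using the figures quoted in the text (32 slots, two sockets, 256GB modules); the function name and the candidate channel counts are illustrative assumptions, and six channels is included only because Knights Landing on the same socket uses six.

```python
# Figures quoted in the article; names below are illustrative, not from the spec.
TOTAL_DIMM_SLOTS = 32
SOCKETS = 2
LRDIMM_GB = 256  # largest DDR4 LRDIMM size quoted in the text


def channel_layouts(slots_per_socket, candidate_channels=(4, 6, 8)):
    """Return (channels, DIMMs per channel) pairs that fill every slot evenly."""
    return [
        (channels, slots_per_socket // channels)
        for channels in candidate_channels
        if slots_per_socket % channels == 0
    ]


slots_per_socket = TOTAL_DIMM_SLOTS // SOCKETS
layouts = channel_layouts(slots_per_socket)

# Capacity if every slot held the largest module, converted from GB to TB.
max_tb_per_cpu = slots_per_socket * LRDIMM_GB / 1024
max_tb_per_system = max_tb_per_cpu * SOCKETS

print(slots_per_socket)                    # 16
print(layouts)                             # [(4, 4), (8, 2)]
print(max_tb_per_cpu, max_tb_per_system)   # 4.0 8.0
```

Sixteen slots per socket do not divide evenly across six channels, which is why only the 4-channel and 8-channel layouts appear in the result.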

That support list also mentions up to 12 SATA devices, up to 3 FHHL cards, and two PCIe x8 slots capable of supporting two M.2 modules each. So this is where that 8x M.2 comes in – if we get four from two PCIe x8 slots, and combine this with up to four M.2 direct attach modules, that makes eight.
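As a quick tally of that M.2 count, here is a minimal sketch using the numbers from the support list. The variable names are made up for illustration, and the lanes-per-module figure is simply the x8 slot width split evenly between its two modules, which is an assumption rather than something the documents state.

```python
# Counts as described in the article; variable names are illustrative.
X8_SLOTS = 2            # PCIe x8 slots that can host M.2 modules
M2_PER_X8_SLOT = 2      # two M.2 modules per x8 slot
DIRECT_ATTACH_M2 = 4    # M.2 modules attached directly to the board

m2_from_slots = X8_SLOTS * M2_PER_X8_SLOT
total_m2 = m2_from_slots + DIRECT_ATTACH_M2

# Assumption: each x8 slot splits its lanes evenly between its modules.
lanes_per_slot_module = 8 // M2_PER_X8_SLOT

print(m2_from_slots, total_m2, lanes_per_slot_module)  # 4 8 4
```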

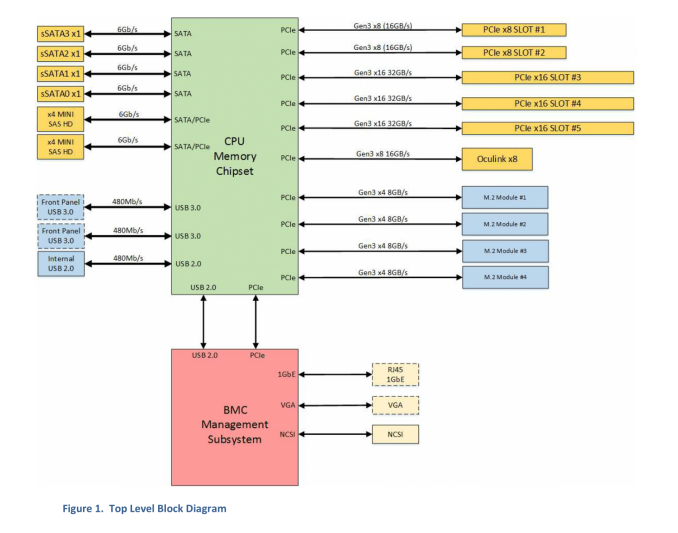

The top-level block diagram is also worth a look. Patrick from STH specifically points out the PCIe 3.0 support for the platform:

On the right-hand side, adding up all the PCIe numbers comes to 88 PCIe 3.0 lanes, or 44 per CPU. This would be an upgrade on the 40 lanes per CPU currently on Broadwell-EP. There is also provision for PCIe lanes to be used with the mini-SAS connectors on the left-hand side. Technically, the BMC requires a PCIe link as well.
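To put the lane count in perspective, the comparison with Broadwell-EP works out as follows. This is a sketch using only the totals quoted above; it assumes the 88 lanes are split evenly between the two sockets, as the text does.

```python
# Totals quoted in the article; an even split across sockets is assumed.
TOTAL_LANES = 88
SOCKETS = 2
BROADWELL_EP_LANES_PER_CPU = 40

lanes_per_cpu = TOTAL_LANES // SOCKETS
extra_lanes = lanes_per_cpu - BROADWELL_EP_LANES_PER_CPU
percent_increase = 100 * extra_lanes / BROADWELL_EP_LANES_PER_CPU

print(lanes_per_cpu, extra_lanes, percent_increase)  # 44 4 10.0
```

Four extra lanes per CPU is a 10% increase, enough for one additional x4 device such as an NVMe drive per socket.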

So When?

Given the long product cycles of Intel’s EP platforms, and the fact that the Big Seven cloud providers have a lot of clout over sales, they are most likely already testing and integrating the next generation hardware. The release to the public, and to smaller players in the OCP space, is a long and slow one. We’re not expecting Skylake-E/EP out until well into 2017, if not the year after, so information will certainly be on the slow burn. Supercomputing 2016 is happening in Utah in a couple of weeks, and although we won’t be there due to scheduling, something may pop out of the woodwork. We’ll keep our eyes peeled.

Source: Microsoft, ServeTheHome

Related Reading

SuperComputing 15: Intel’s Knights Landing / Xeon Phi Silicon on Display

A Few Notes on Intel’s Knights Landing and MCDRAM Modes from SC15

The Next Generation Open Compute Hardware: Tried and Tested

The Intel Xeon E5 v4 Review: Testing Broadwell-EP With Demanding Server Workloads

15 Comments

Kevin G - Wednesday, November 2, 2016

Intel indicated plans for 1 TB 3D Xpoint DIMMs so there is still a capacity advantage there.

Magichands8 - Wednesday, November 2, 2016

When? By 2050 DRAM will have had plenty of time to catch up. Intel has already had to delay Optane once, at least publicly, and the fact that they've gone completely silent about the technology suggests to me that they may have run into some serious road blocks. I'm starting to think Intel was telling the truth when they said that 3D XPoint was not PCM memory. So far it seems to be constructed entirely of press releases.

eSyr - Tuesday, November 1, 2016

There were some good indications ( http://wccftech.com/intel-skylake-e-lga-3647-hexa-... http://wccftech.com/massive-intel-xeon-e5-xeon-e7-... ) that Skylake-EP will support 6-channel memory, so this is rather odd that MS tries to pretend that nobody knows it and says "up to 32 dimms" instead of "up to 36 dimms" (6 channels, 3 DPC for each of 2 packages). 4 DPC is very unlikely, i'm not really sure it can be ever feasible without additional buffers.

edzieba - Wednesday, November 2, 2016

Holy guacamole, Oculink actually turning up in something!

Kevin G - Thursday, November 3, 2016

I wonder if any of the DIMM slots were to be dedicated for 3D Xpoint DIMMs only. For example, each socket would have twelve DDR4 + four 3D Xpoint DIMM slots. Double up the socket count to get 32 DIMMs total. This could be handled in two different ways: the extra 3D Point slots as an extra slot across four memory channels (two 2 DPC + four 3 DPC) or simply dedicated memory channels to 3D Xpoint (four 2 DPC DDR4 + two 4 DPC 3D Xpoint). Either way that'd produce 32 DIMMs on a dual socket system without the need for a memory buffer.